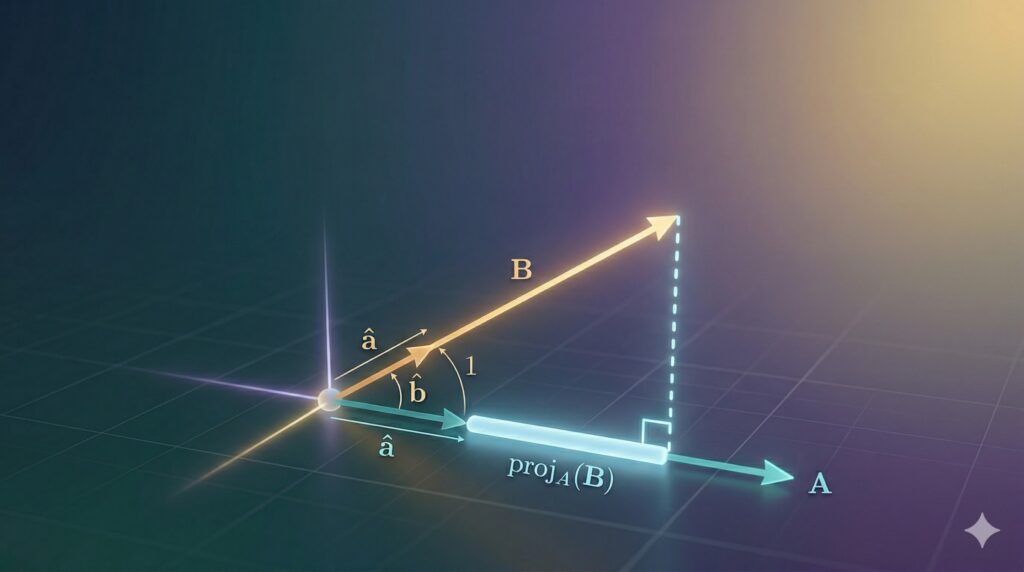

The Geometry Behind the Dot Product: Unit Vectors, Projections, and Intuition

This article is the first of three parts. Each part stands on its own, so you don’t need to read the others to understand it. The dot product is one of the most important operations in machine learning – but it’s hard to understand without the right geometric foundations. In this first part, we build …
