Image by Author

# Introduction

Most people who use artificial intelligence (AI) coding assistants today rely on cloud-based tools like Claude Code, GitHub Copilot, Cursor, and others. They are powerful, no doubt. But there is one huge trade-off hiding in plain sight: your code has to be sent to someone else’s servers in order for these tools to work.

That means every function, every application programming interface (API) key, every internal architecture choice is being transmitted to Anthropic, OpenAI, or another provider before you get your answer back. And even if they promise privacy, many teams simply cannot take that risk. Especially if you are working with:

- Proprietary or confidential codebases

- Enterprise client systems

- Research or government workloads

- Anything under a non-disclosure agreement (NDA)

This is where local, open-source coding models change the game.

Running your own AI model locally gives you control, privacy, and security. No code leaves your machine. No external logs. No “trust us.” And on top of that, if you already have capable hardware, you can save thousands on API and subscription costs.

In this article, we are going to walk through seven open-weight AI coding models that consistently score at the top of coding benchmarks and are rapidly becoming real alternatives to proprietary tools.

If you want the short version, scroll to the bottom for a quick comparison table of all seven models.

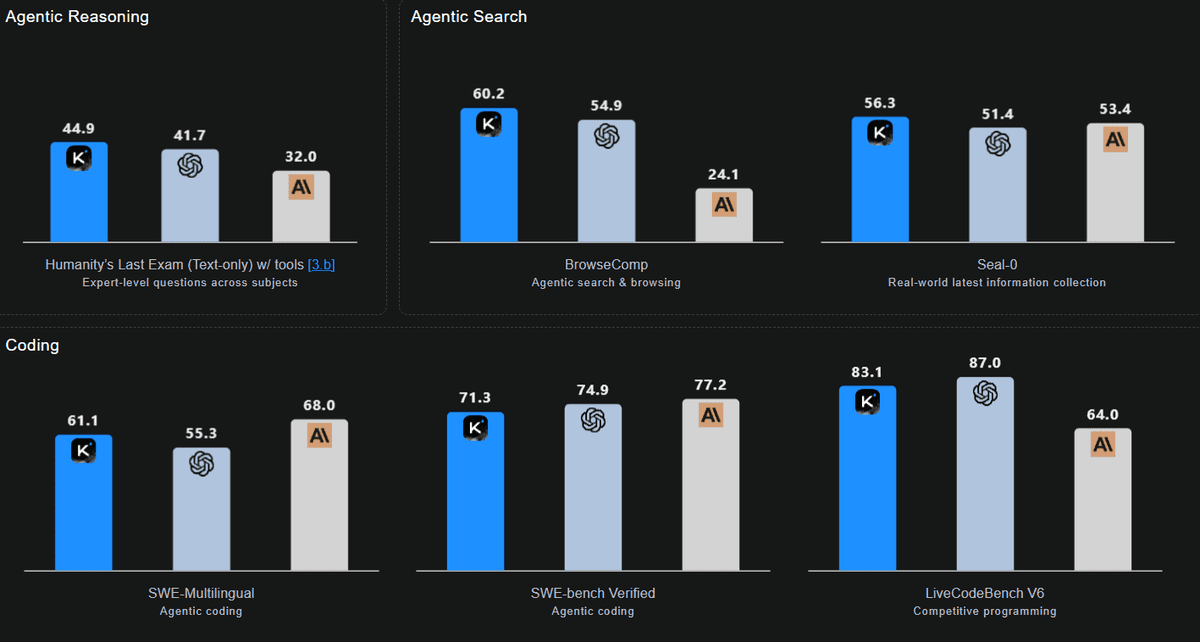

# 1. Kimi-K2-Thinking By Moonshot AI

Kimi-K2-Thinking, developed by Moonshot AI, is an advanced open-source thinking model designed as a tool-using agent that reasons step-by-step while dynamically invoking functions and services. It maintains stable long-horizon agency across 200 to 300 sequential tool calls, a significant improvement over earlier systems, which tended to drift after 30 to 50 steps. This enables autonomous workflows in research, coding, and writing.

Architecturally, K2 Thinking is a Mixture of Experts (MoE) model with 1 trillion total parameters, of which 32 billion are active per token. It includes 384 experts (with 8 selected per token and 1 shared), 61 layers (with 1 dense layer), and an attention hidden dimension of 7,168 with 64 heads. It uses Multi-head Latent Attention (MLA) and SwiGLU activation. The model supports a context window of 256,000 tokens and has a vocabulary of 160,000. It is a native INT4 model: quantization-aware training (QAT) is applied during post-training, resulting in approximately a 2× speed-up in low-latency mode while also reducing GPU memory usage.
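To make the "8 of 384 experts per token" figure concrete, here is a minimal sketch of generic top-k expert routing. It is an illustration only: the source does not describe the gating function Kimi-K2-Thinking actually uses, so the softmax over the selected logits, the random router scores, and the `route_token` helper are all assumptions.

```python
import math
import random

NUM_EXPERTS = 384  # routed experts, per the description above
TOP_K = 8          # routed experts selected per token


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and return (expert_id, weight) pairs.

    Assumption: weights are a softmax over the selected logits only,
    so they sum to 1. Real models may use a different gating function.
    """
    # Indices of the top_k largest router logits.
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Softmax restricted to the chosen experts (subtract the max for stability).
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


# Stand-in router scores for a single token (random, for illustration only).
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
routing = route_token(logits)
print(f"{len(routing)} of {NUM_EXPERTS} routed experts used, plus 1 shared expert")
```

Only the selected experts run for a given token, which is why the active parameter count (32 billion) is so much smaller than the total (1 trillion).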

Image by Author

In benchmark tests, K2 Thinking achieves impressive results, particularly in areas where long-horizon reasoning and tool use are critical. Its coding performance is well-balanced, with scores of 71.3 on SWE-bench Verified, 41.9 on Multi-SWE, 44.8 on SciCode, and 47.1 on Terminal-Bench. Its standout result is on LiveCodeBench V6, where it scored 83.1, demonstrating particular strengths in multilingual and agentic workflows.

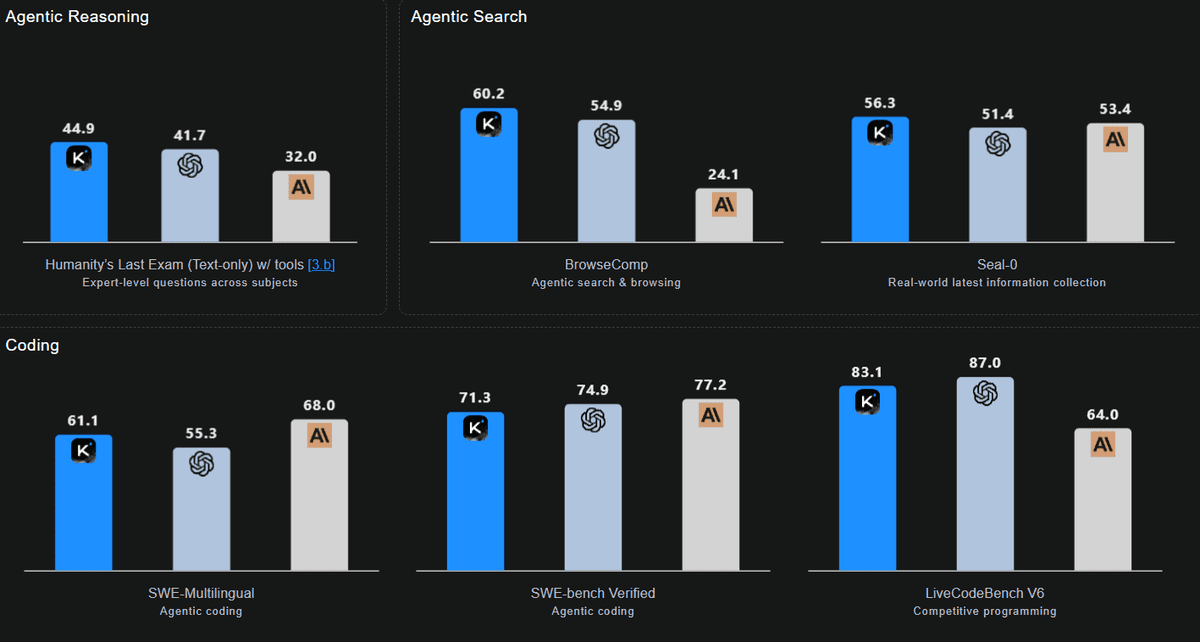

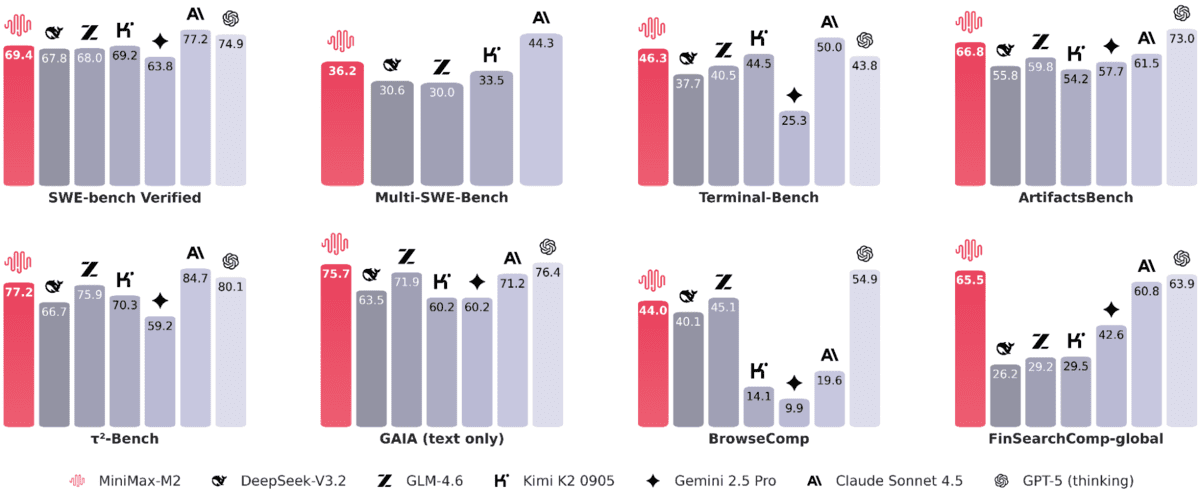

# 2. MiniMax‑M2 By MiniMaxAI

MiniMax-M2 redefines efficiency for agent-based workflows. It is a compact, fast, and cost-effective Mixture of Experts (MoE) model with 230 billion total parameters, of which only 10 billion are activated per token. By routing each token to the most relevant experts, MiniMax-M2 achieves end-to-end tool-use performance typically associated with larger models while reducing latency, cost, and memory usage. This makes it ideal for interactive agents and batched sampling.

Designed for elite coding and agent tasks without compromising general intelligence, it focuses on the plan → act → verify loops. These loops remain responsive due to the 10 billion activation footprint.
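A quick back-of-the-envelope calculation shows how small the activation footprint is relative to the full model. The helper name `active_fraction` is hypothetical, and the sketch assumes that per-token compute scales roughly with active parameters while memory still has to hold all of them.

```python
def active_fraction(total_params_b, active_params_b):
    """Share of parameters used per token in a Mixture of Experts model."""
    return active_params_b / total_params_b


# Figures from the description above: 230B total, 10B active per token.
frac = active_fraction(total_params_b=230, active_params_b=10)
print(f"MiniMax-M2 activates about {frac:.1%} of its weights per token")
# prints: MiniMax-M2 activates about 4.3% of its weights per token
```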

Image by Author

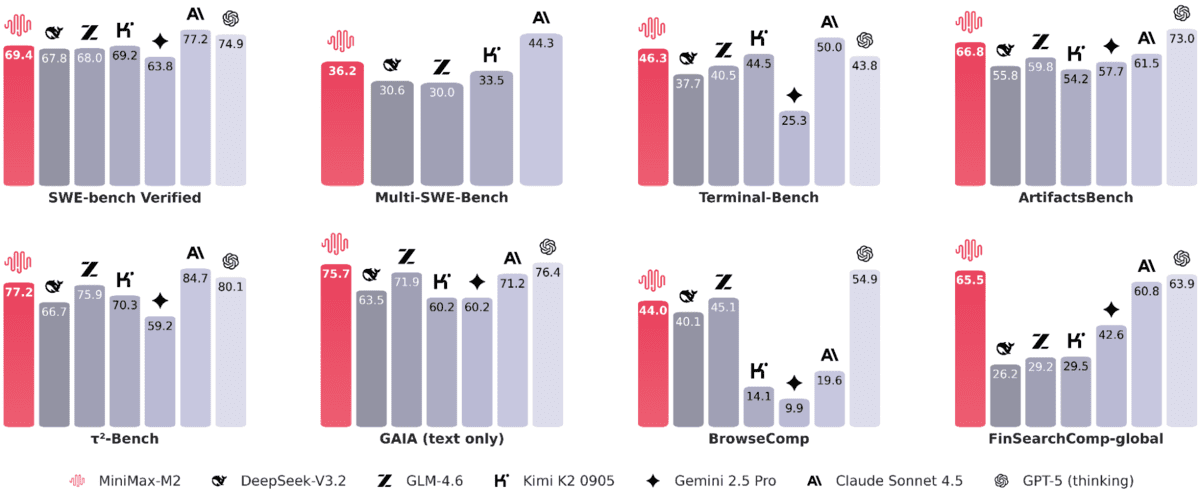

In real-world coding and agent benchmarks, the reported results are strong: 69.4 on SWE-bench, 36.2 on Multi-SWE-Bench, 56.5 on SWE-bench Multilingual, 46.3 on Terminal-Bench, and 66.8 on ArtifactsBench. For web and research agents, the reported scores are BrowseComp 44 (48.5 on the Chinese variant), GAIA (text) 75.7, xbench-DeepSearch 72, τ²-Bench 77.2, HLE (with tools) 31.8, and FinSearchComp-global 65.5.

# 3. GPT‑OSS‑120B By OpenAI

GPT-OSS-120b is an open-weight MoE model designed for production use in general-purpose, high-reasoning workloads. It is optimized to run on a single 80GB GPU and features a total of 117 billion parameters, with 5.1 billion active parameters per token.
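To see why a model of this size can plausibly fit on one 80GB GPU, it helps to estimate the memory needed for the weights alone at different precisions. This is a rough sketch: the bit widths below are illustrative assumptions (the text above does not state the storage format), and the estimate ignores the KV cache, activations, and any quantization overhead.

```python
def weight_memory_gb(total_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return total_params * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 117e9  # total parameters, from the description above

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
# 16-bit weights: ~234.0 GB
#  8-bit weights: ~117.0 GB
#  4-bit weights: ~58.5 GB
```

Under these assumptions, only the roughly 4-bit case leaves headroom on an 80GB card, so check the precision of the checkpoint you download before sizing hardware.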

Key capabilities of GPT-OSS-120b include configurable reasoning effort levels (low, medium, high), full chain-of-thought access for debugging (not intended for end users), native agentic tools such as function calling, browsing, Python integration, and structured outputs, along with full fine-tuning support. Additionally, a smaller companion model, GPT-OSS-20b, is available for users who need lower latency or want to build local and specialized applications.
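As a rough illustration of how the reasoning effort setting might be used with a locally hosted copy, the sketch below builds a chat-style request payload. Everything here is an assumption to verify against your serving stack: the `build_chat_request` helper and the model name are hypothetical, and setting the level with a `Reasoning: <level>` line in the system message is one convention, not necessarily the one your server expects.

```python
import json


def build_chat_request(prompt, reasoning_effort="medium", model="gpt-oss-120b"):
    """Build a chat-completions style payload for a locally hosted model."""
    if reasoning_effort not in ("low", "medium", "high"):
        raise ValueError("reasoning_effort must be low, medium, or high")
    return {
        "model": model,  # hypothetical name; use whatever your server registers
        "messages": [
            # Assumption: effort is set with a "Reasoning: <level>" system line.
            {"role": "system", "content": f"Reasoning: {reasoning_effort}"},
            {"role": "user", "content": prompt},
        ],
    }


payload = build_chat_request("Explain this stack trace.", reasoning_effort="high")
print(json.dumps(payload, indent=2))
```

Lower effort trades depth for latency, so a reasonable starting point is `low` for autocomplete-style requests and `high` for debugging or multi-step refactors.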

Image by Author

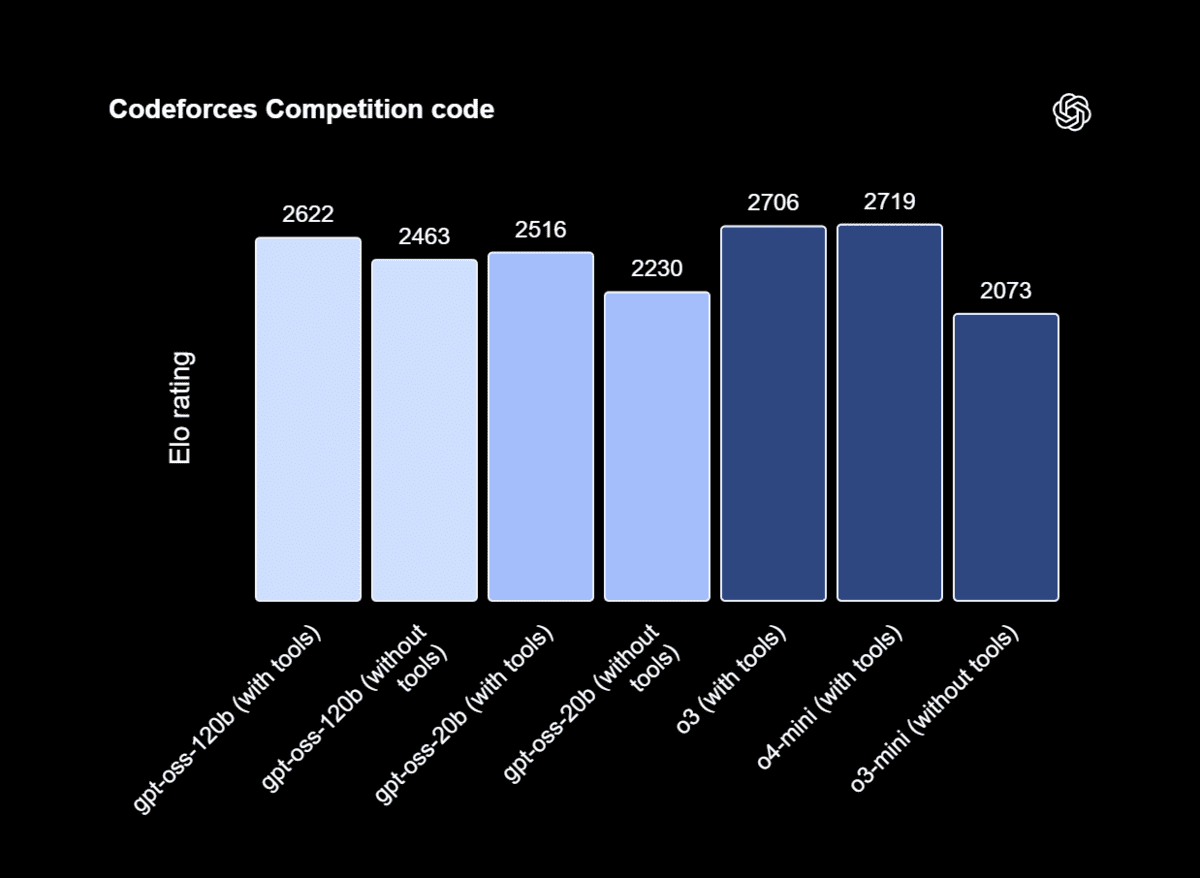

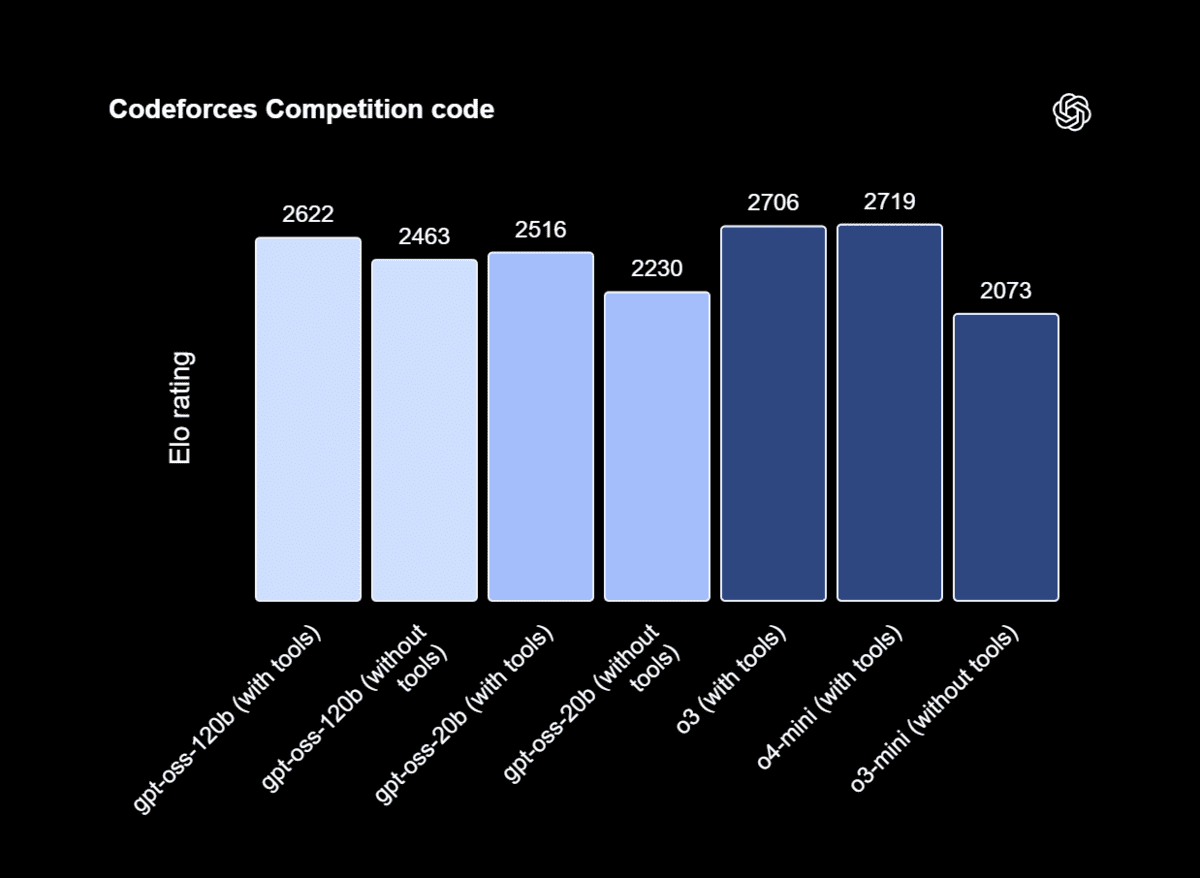

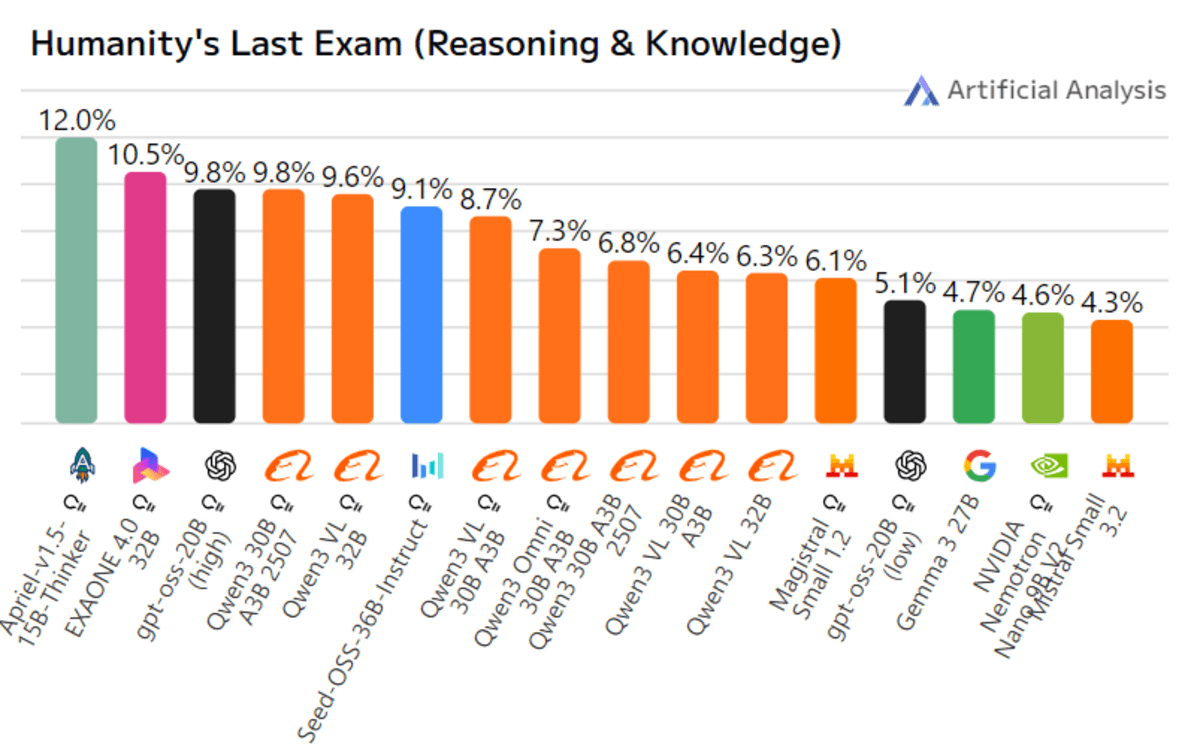

In external benchmarking, GPT-OSS-120b ranks as the third-highest model on the Artificial Analysis Intelligence Index. It demonstrates some of the best performance and speed relative to its size, based on Artificial Analysis’s cross-model comparisons of quality, output speed, and latency.

GPT-OSS-120b outperforms o3-mini and matches or exceeds o4-mini in areas such as competition coding (Codeforces), general problem solving (MMLU, HLE), and tool usage (TauBench). It also surpasses o4-mini on health-related queries (HealthBench) and competition mathematics (AIME 2024 and 2025).

# 4. DeepSeek‑V3.2‑Exp By DeepSeek AI

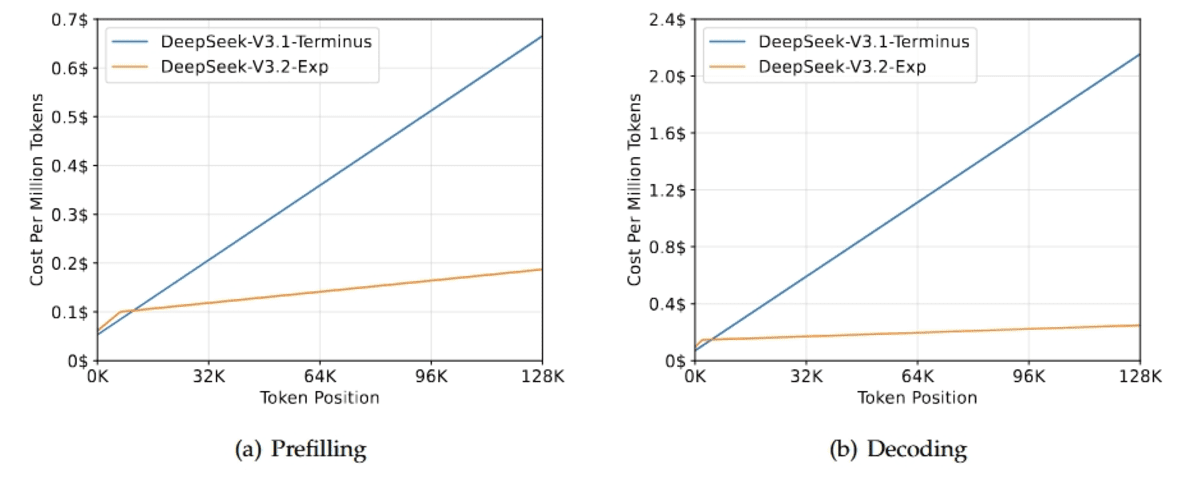

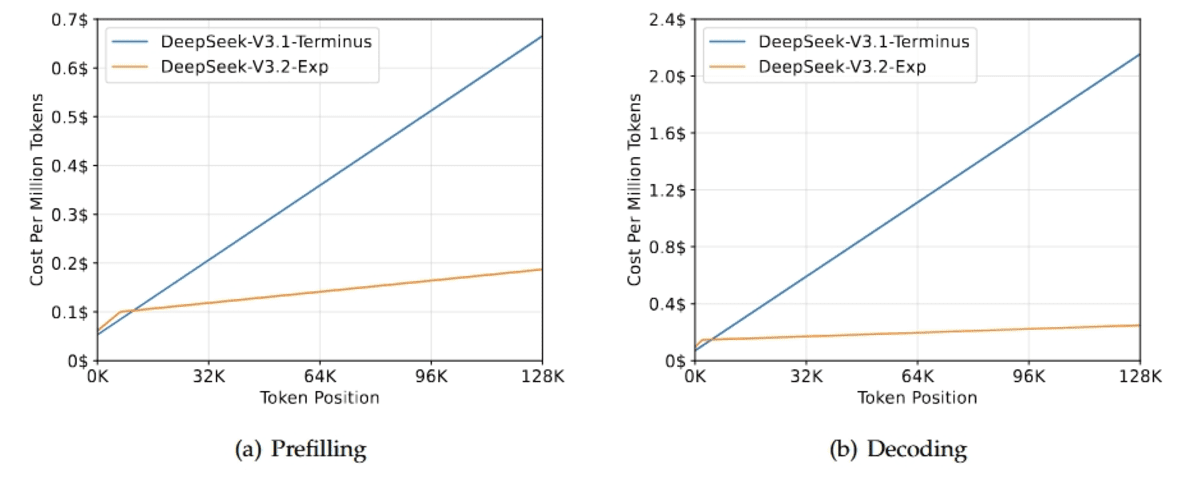

DeepSeek-V3.2-Exp is an experimental intermediate step toward the next generation of DeepSeek AI’s architecture. It builds upon V3.1-Terminus and introduces DeepSeek Sparse Attention (DSA), a fine-grained sparse attention mechanism designed to enhance training and inference efficiency in long-context scenarios.

The primary focus of this release is to validate the efficiency gains for extended sequences while maintaining stable model behavior. To isolate the impact of DSA, the training configurations were intentionally aligned with those of V3.1-Terminus. The results indicate that the output quality remains virtually identical.
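The general idea behind sparse attention can be sketched in a few lines: each query attends to only a small subset of positions instead of the whole sequence. The toy below is not DeepSeek's DSA implementation, whose details are not given here. It is a generic top-k variant with a hypothetical `sparse_attention` helper, and for simplicity it still scores every key before discarding most of them, which a real system would avoid.

```python
import math


def sparse_attention(query, keys, values, top_k):
    """Attend from one query to only the top_k highest-scoring keys."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    # Keep only the top_k positions; the rest contribute nothing to the output.
    kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Softmax over the kept positions only (subtract the max for stability).
    peak = max(scores[i] for i in kept)
    weights = [math.exp(scores[i] - peak) for i in kept]
    total = sum(weights)
    dim = len(values[0])
    output = [0.0] * dim
    for w, i in zip(weights, kept):
        for d in range(dim):
            output[d] += (w / total) * values[i][d]
    return output, sorted(kept)


keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0], [4.0]]
out, kept = sparse_attention([1.0, 0.0], keys, values, top_k=2)
print(kept, out)  # positions 0 and 2 score highest and are weighted equally
```

With `top_k` equal to the sequence length this reduces to ordinary dense attention, which is why the cost savings grow with context length.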

Image by Author

Across public benchmarks, V3.2-Exp performs on par with V3.1-Terminus, with only minor shifts (V3.2-Exp score listed first). It matches on MMLU-Pro at 85.0, reaches near parity on LiveCodeBench at approximately 74, and dips slightly on GPQA (79.9 versus 80.7) and HLE (19.8 versus 21.7). It gains on AIME 2025 (89.3 versus 88.4) and Codeforces (2121 versus 2046).

# 5. GLM‑4.6 By Z.ai

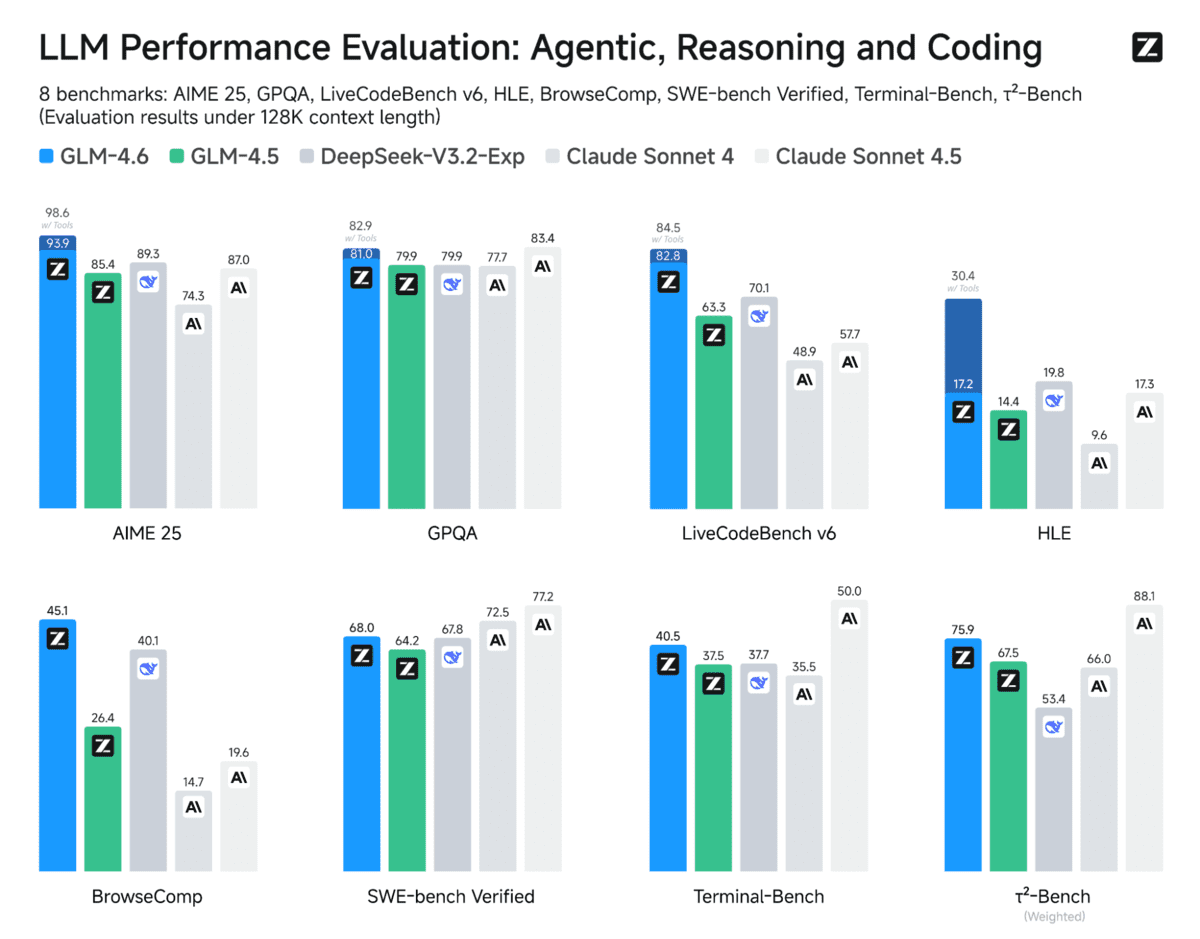

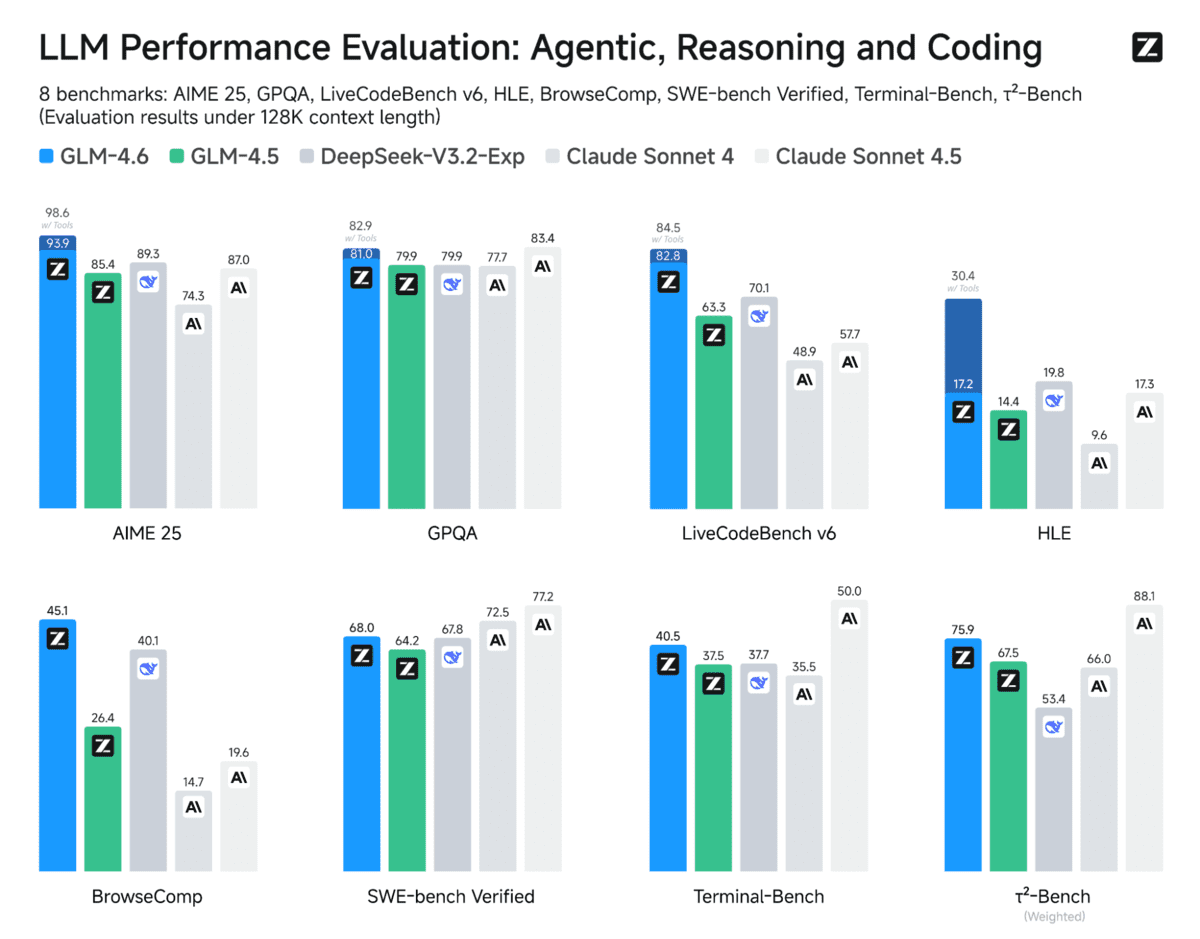

Compared to GLM‑4.5, GLM‑4.6 expands the context window from 128K to 200K tokens. This enhancement allows for more complex and long-horizon workflows without losing track of information.

GLM‑4.6 also offers superior coding performance, achieving higher scores on code benchmarks and delivering stronger real-world results in tools such as Claude Code, Cline, Roo Code, and Kilo Code, including more refined front-end generation.

Image by Author

Additionally, GLM‑4.6 introduces advanced reasoning capabilities with tool use during inference, which boosts its overall performance. This version features more capable agents with enhanced tool use and search-agent performance, as well as tighter integration within agent frameworks.

Across eight public benchmarks that cover agents, reasoning, and coding, GLM‑4.6 shows clear improvements over GLM‑4.5 and maintains competitive advantages compared to models such as DeepSeek‑V3.1‑Terminus and Claude Sonnet 4.

# 6. Qwen3‑235B‑A22B‑Instruct‑2507 By Alibaba Cloud

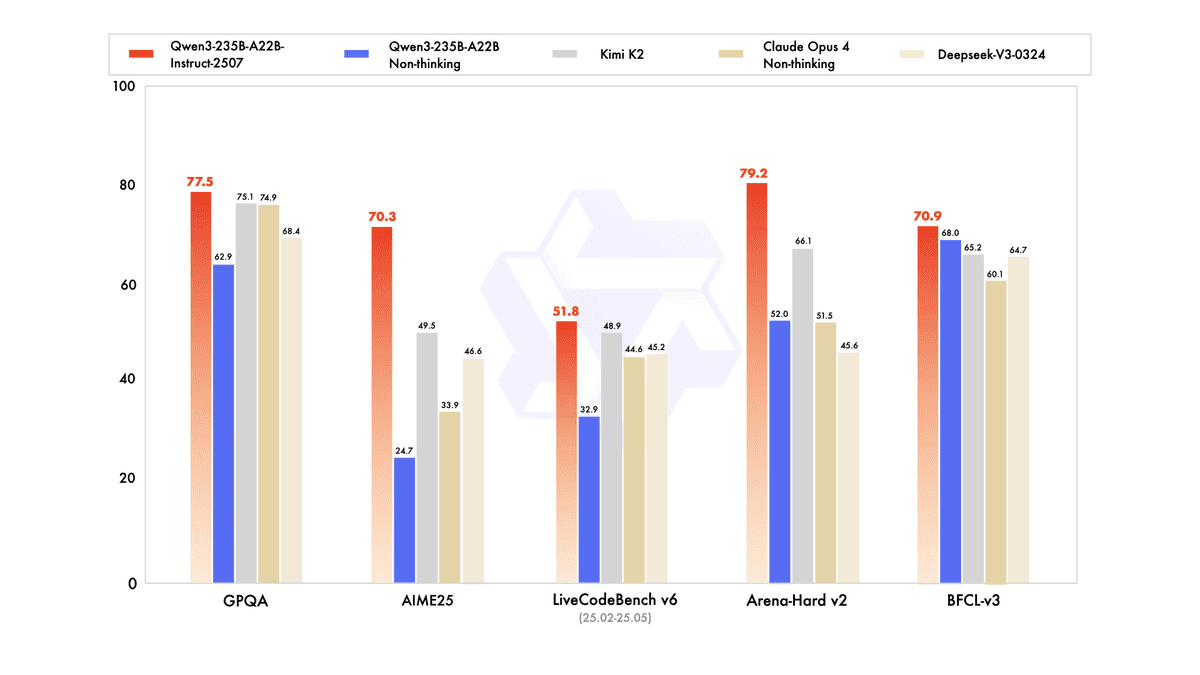

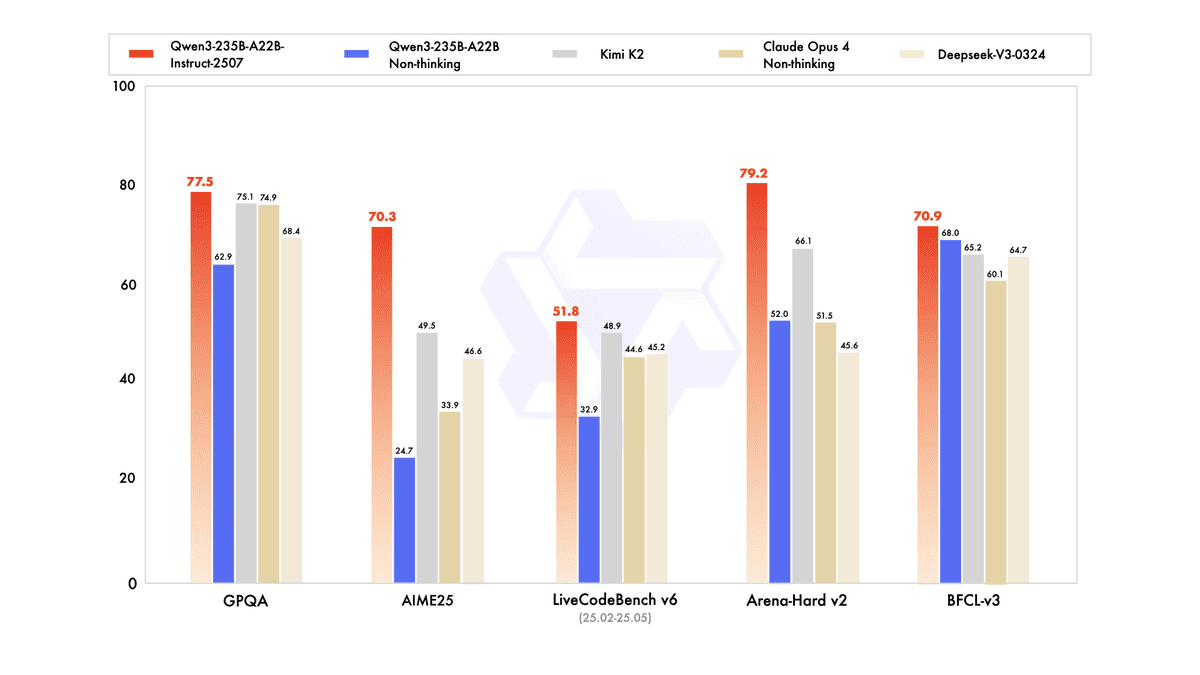

Qwen3-235B-A22B-Instruct-2507 is the non-thinking variant of Alibaba Cloud’s flagship model, designed for practical application without revealing its reasoning process. It offers significant upgrades in general capabilities, including instruction following, logical reasoning, mathematics, science, coding, and tool use. Additionally, it has made substantial advancements in long-tail knowledge across multiple languages and demonstrates improved alignment with user preferences for subjective and open-ended tasks.

As a non-thinking model, its primary goal is to generate direct answers rather than provide reasoning traces, focusing on helpfulness and high-quality text for everyday workflows.

Image by Author

In public evaluations related to agents, reasoning, and coding, it has shown clear improvements over previous releases and maintains a competitive edge over leading open-source and proprietary models (e.g., Kimi-K2, DeepSeek-V3-0324, and Claude-Opus4-Non-thinking), as noted by third-party reports.

# 7. Apriel‑1.5‑15B‑Thinker By ServiceNow‑AI

Apriel-1.5-15b-Thinker is ServiceNow AI’s multimodal reasoning model from the Apriel small language model (SLM) series. It introduces image reasoning capabilities in addition to the previous text model, highlighting a robust mid-training regimen that includes extensive continual pretraining on both text and images, followed by text-only supervised fine-tuning (SFT), without any image SFT or reinforcement learning (RL). Despite its compact size of 15 billion parameters, which allows it to run on a single GPU, it boasts a reported context length of approximately 131,000 tokens. This model aims for performance and efficiency comparable to much larger models, around ten times its size, especially on reasoning tasks.

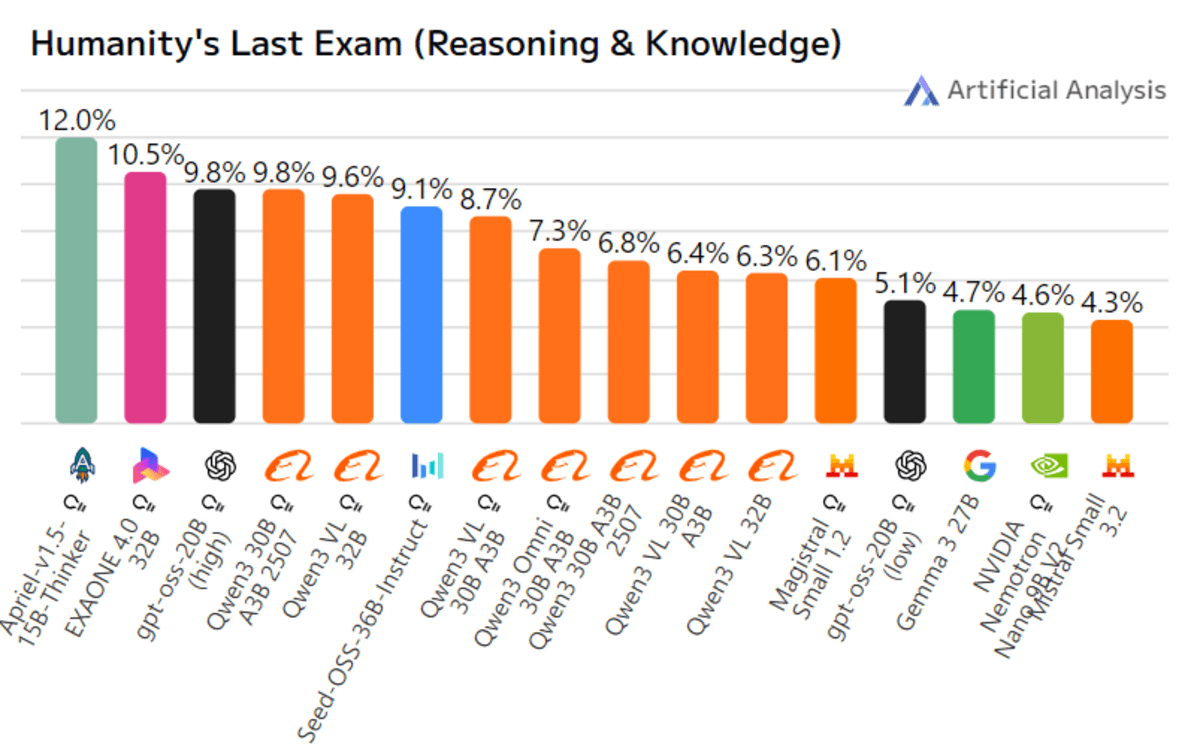

Image by Author

In public benchmarks, Apriel-1.5-15B-Thinker achieves a score of 52 on the Artificial Analysis Intelligence Index, making it competitive with models like DeepSeek-R1-0528 and Gemini-Flash. It is claimed to be at least ten times smaller than any other model scoring above 50. Additionally, it demonstrates strong performance as an enterprise agent, scoring 68 on Tau2 Bench Telecom and 62 on IFBench.

# Summary Table

Here is a summary of the seven open-weight models to help you pick one for your specific use case:

| Model | Size / Context | Key Strength | Best For |
|---|---|---|---|
| Kimi-K2-Thinking (MoonshotAI) | 1T / 32B active, 256K ctx | Stable long-horizon tool use (~200–300 calls); strong multilingual & agentic coding | Autonomous research/coding agents needing persistent planning |
| MiniMax-M2 (MiniMaxAI) | 230B / 10B active, 128K ctx | High efficiency + low latency for plan→act→verify loops | Scalable production agents where cost + speed matter |
| GPT-OSS-120B (OpenAI) | 117B / 5.1B active, 128K ctx | General high-reasoning with native tools; full fine-tuning | Enterprise/private deployments, competition coding, reliable tool use |
| DeepSeek-V3.2-Exp | 671B / 37B active, 128K ctx | DeepSeek Sparse Attention (DSA), efficient long-context inference | Development/research pipelines needing long-doc efficiency |
| GLM-4.6 (Z.ai) | 355B / 32B active, 200K ctx | Strong coding + reasoning; improved tool-use during inference | Coding copilots, agent frameworks, Claude Code style workflows |
| Qwen3-235B (Alibaba Cloud) | 235B, 256K ctx | High-quality direct answers; multilingual; tool use without chain-of-thought (CoT) output | Large-scale code generation & refactoring |
| Apriel-1.5-15B-Thinker (ServiceNow) | 15B, ~131K ctx | Compact multimodal (text+image) reasoning for enterprise | On-device/private cloud agents, DevOps automations |
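If you want to narrow the list programmatically, the sizes and context windows from the table can be turned into a simple filter. This is only a sketch: the `shortlist` helper and its thresholds are hypothetical, the figures are copied from the table above, and real hardware fit also depends on quantization and serving overhead.

```python
MODELS = [
    # (name, total parameters in billions, context window in thousands of tokens)
    ("Kimi-K2-Thinking", 1000, 256),
    ("MiniMax-M2", 230, 128),
    ("GPT-OSS-120B", 117, 128),
    ("DeepSeek-V3.2-Exp", 671, 128),
    ("GLM-4.6", 355, 200),
    ("Qwen3-235B", 235, 256),
    ("Apriel-1.5-15B-Thinker", 15, 131),
]


def shortlist(max_total_b, min_context_k):
    """Return models at or under a size budget with at least the given context."""
    return [name for name, total_b, context_k in MODELS
            if total_b <= max_total_b and context_k >= min_context_k]


print(shortlist(max_total_b=250, min_context_k=128))
# ['MiniMax-M2', 'GPT-OSS-120B', 'Qwen3-235B', 'Apriel-1.5-15B-Thinker']
```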

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master’s degree in technology management and a bachelor’s degree in telecommunication engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.