to be the state-of-the-art object detection algorithm, looked to become obsolete thanks to the appearance of other methods like SSD (Single Shot Multibox Detector), DSSD (Deconvolutional Single Shot Detector), and RetinaNet. Finally, two years after the introduction of YOLOv2, the authors decided to improve the algorithm and eventually came up with the next YOLO version, reported in a paper titled “YOLOv3: An Incremental Improvement” [1]. As the title suggests, the authors did not change much in the underlying algorithm compared to YOLOv2. But hey, when it comes to performance, it actually looks pretty impressive.

In this article I am going to talk about the modifications the authors made to YOLOv2 to create YOLOv3 and how to implement the model architecture from scratch with PyTorch. I highly recommend reading my previous articles about YOLOv1 [2, 3] and YOLOv2 [4] before this one, unless you already have a strong foundation in how these two earlier versions of YOLO work.

What Makes YOLOv3 Better Than YOLOv2

The Vanilla Darknet-53

The modification the authors made was mainly related to the architecture, for which they proposed a backbone model referred to as Darknet-53. See the detailed structure of this network in Figure 1. As the name suggests, this model is an improvement upon the Darknet-19 used in YOLOv2. If you count the number of layers in Darknet-53, you will find that this network consists of 52 convolution layers and a single fully-connected layer at the end. Keep in mind that later, when we use it in YOLOv3, we will feed it images of size 416×416 rather than 256×256 as written in the figure.

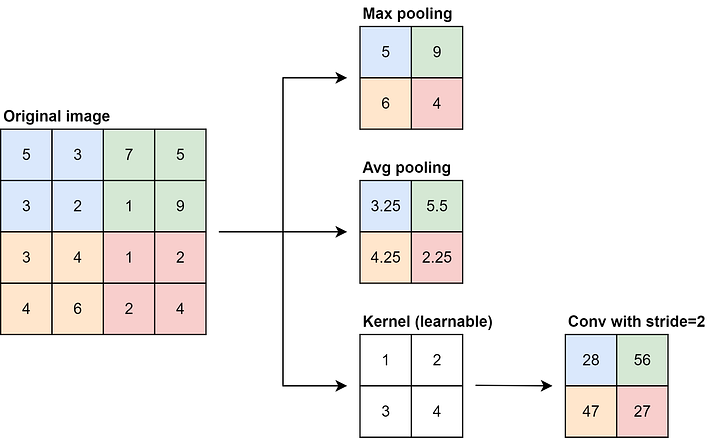
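As a quick sanity check on the layer count, the convolution layers can be tallied in a few lines of plain Python. This is only a sketch: the residual-block repeats (1, 2, 8, 8, 4) are taken from the implementation later in this article, and the variable names are my own.

```python
# Number of residual blocks in each of the five stages of Darknet-53.
# Assumption: the repeats follow the implementation later in this article.
residual_repeats = [1, 2, 8, 8, 4]

stem_convs = 1                               # the very first 3x3 convolution
downsample_convs = len(residual_repeats)     # one stride-2 convolution per stage
residual_convs = 2 * sum(residual_repeats)   # each residual block holds two convolutions

total_convs = stem_convs + downsample_convs + residual_convs
print(total_convs)  # 52
```

Adding the single fully-connected layer to these 52 convolutions gives 53 layers, which is presumably where the number in the name comes from.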

If you’re familiar with Darknet-19, you will remember that it performs spatial downsampling using maxpooling operations after every stack of several convolution layers. In Darknet-53, the authors replaced these pooling operations with convolutions of stride 2. This was essentially done because a maxpooling layer completely ignores non-maximum values, causing us to lose a lot of information contained in the lower-intensity pixels. We could use average pooling as an alternative, but in theory this approach won’t be optimal either because all pixels within the small region are weighted the same. So as a solution, the authors decided to use a convolution layer with a stride of 2, which lets the model reduce the image resolution while capturing spatial information with learned, position-specific weights. You can see the illustration for this in Figure 2 below.

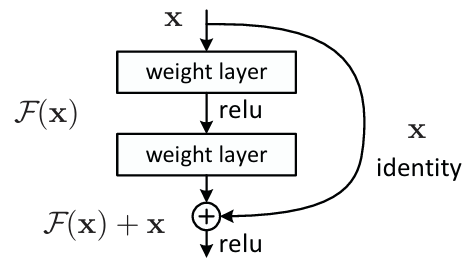
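To make the difference between the three downsampling options concrete, here is a minimal sketch in plain Python that reduces a single 2×2 window to one number in each way. The window values and the kernel weights are made up for illustration. Note that Darknet-53 actually uses 3×3 kernels for its stride-2 convolutions; a 2×2 window is used here only to keep the comparison with pooling direct.

```python
# One 2x2 window of a feature map (values are made up for illustration).
window = [[1.0, 2.0],
          [3.0, 9.0]]
values = [v for row in window for v in row]

# Max pooling keeps only the largest value; the other three are discarded.
max_pooled = max(values)

# Average pooling uses every value, but all of them with the same weight.
avg_pooled = sum(values) / len(values)

# A strided convolution applies one weight per position, and these weights
# are learned during training. The kernel below is a hypothetical stand-in.
kernel = [[0.25, 0.5],
          [0.125, 0.125]]
weights = [w for row in kernel for w in row]
conv_out = sum(w * v for w, v in zip(weights, values))

print(max_pooled, avg_pooled, conv_out)  # 9.0 3.75 2.75
```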

Next, the backbone of this YOLO version is now equipped with residual blocks, an idea that originates from ResNet. One thing that I want to emphasize regarding our implementation is the activation function within the residual block. You can see in Figure 3 below that according to the original ResNet paper, the second activation function is placed after the element-wise summation. However, based on the other tutorials that I read [6, 7], I found that in the case of YOLOv3 the second activation function is placed right after the weight layer instead (before the summation). So later in the implementation, I decided to follow the guide in these tutorials since the YOLOv3 paper does not give any explanation about it.
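The two orderings can be compared on a single number. The sketch below is only an illustration: `weight_layers` is a hypothetical stand-in for the convolution and batch normalization layers that come before the second activation, and the same Leaky ReLU is used in both variants so that only the ordering differs (the original ResNet uses a plain ReLU).

```python
def leaky_relu(v, negative_slope=0.1):
    """Leaky ReLU applied to a single number."""
    return v if v >= 0 else negative_slope * v

def weight_layers(v):
    """Hypothetical stand-in for the layers before the second activation."""
    return -2.0 * v

def resnet_style(x):
    # Ordering in the original ResNet paper: sum first, then activate.
    return leaky_relu(weight_layers(x) + x)

def yolov3_style(x):
    # Ordering followed in this article: activate first, then sum.
    return leaky_relu(weight_layers(x)) + x

x = 1.0
print(resnet_style(x))  # the activation is applied to -2.0 + 1.0
print(yolov3_style(x))  # the activation is applied to -2.0, then x is added
```

With the second ordering, the input `x` reaches the output of the block without passing through the activation function.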

Darknet-53 With Detection Heads

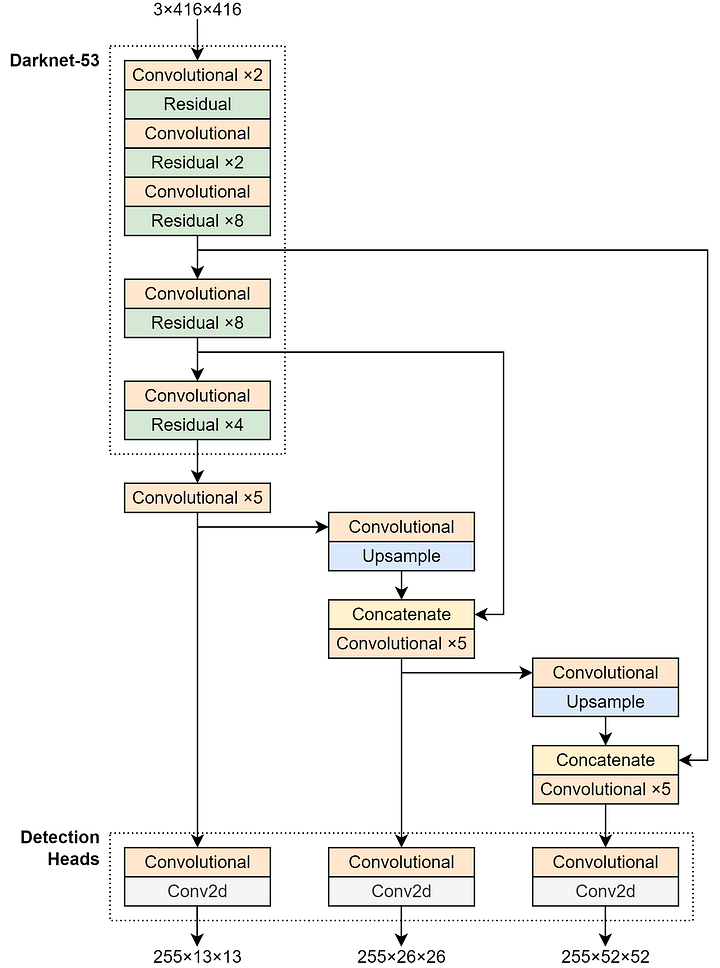

Keep in mind that the architecture in Figure 1 is only meant for classification. Thus, we need to replace everything after the last residual block if we want to make it compatible with detection tasks. Again, the original YOLOv3 paper does not provide a detailed implementation guide, hence I decided to search for one and eventually found it in the paper referenced as [9]. I redrew the illustration from that paper to make the architecture look clearer, as shown in Figure 4 below.

There are actually lots of things to explain regarding the above architecture. Let’s start from the part I refer to as the detection heads. Unlike the previous YOLO versions, which relied on a single head, YOLOv3 has 2 additional heads. Thus, we will later have 3 prediction tensors for every single input image. These three detection heads have different specializations: the leftmost head (13×13) is responsible for detecting large objects, the middle head (26×26) is for detecting medium-sized objects, and the one on the right (52×52) is used to detect small objects. We can think of the 52×52 tensor as the feature map that contains the detailed representation of an image, hence it is suitable for detecting small objects. Conversely, the 13×13 prediction tensor is meant to detect large objects because its lower spatial resolution is effective at capturing the general shape of an object.
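One way to get a feel for the three grids is to look at how large a grid cell is in pixels and which cell a given point falls into. The sketch below assumes a 416×416 input and, as in the earlier YOLO versions, that the cell containing an object's midpoint is the one responsible for it. The function name and the example coordinates are hypothetical.

```python
IMAGE_SIZE = 416
GRID_SIZES = [13, 26, 52]  # large-, medium-, and small-object heads

def responsible_cell(cx, cy, grid_size, image_size=IMAGE_SIZE):
    """Return (column, row) of the grid cell that contains pixel (cx, cy)."""
    cell_size = image_size / grid_size
    return int(cx // cell_size), int(cy // cell_size)

# Hypothetical object midpoint, in pixels.
cx, cy = 200, 300

for grid_size in GRID_SIZES:
    cell_size = IMAGE_SIZE // grid_size
    col, row = responsible_cell(cx, cy, grid_size)
    print(f"{grid_size}x{grid_size} grid: {cell_size}px cells, midpoint in cell ({col}, {row})")
```

Each cell of the 13×13 grid covers a 32×32 pixel region, whereas a cell of the 52×52 grid covers only 8×8 pixels, which matches the intuition that the finer grid is better suited to small objects.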

Still with the detection head, you can also see in Figure 4 that the three prediction tensors have 255 channels. To understand where this number comes from, we first need to know that each detection head has 3 prior boxes. Following the rule given in YOLOv2, each of these prior boxes is configured such that it can predict its own object category independently. With this mechanism, the length of the feature vector of each grid cell can be obtained by computing B×(5+C), where B is the number of prior boxes, C is the number of object classes, and 5 accounts for the four box values (x, y, w, h) plus the bounding box confidence (a.k.a. objectness). In the case of YOLOv3, each detection head has 3 prior boxes and 80 classes, assuming that we train it on the 80-class COCO dataset. By plugging these numbers into the formula, we obtain 3×(5+80)=255 prediction values for a single grid cell.
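The formula is easy to check in code. The helper name below is my own, and the 20-class case is just a hypothetical second dataset to show how the channel count changes with the number of classes.

```python
def channels_per_cell(num_priors, num_classes):
    """B x (5 + C): per prior box, 4 box values + 1 objectness + C class scores."""
    return num_priors * (5 + num_classes)

print(channels_per_cell(num_priors=3, num_classes=80))  # 255 (COCO)
print(channels_per_cell(num_priors=3, num_classes=20))  # 75 (hypothetical 20-class dataset)
```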

In fact, using a multi-head mechanism like this allows the model to detect more objects compared to the earlier YOLO versions. In YOLOv1, an image is divided into 7×7 grid cells and each of them can predict 2 bounding boxes, so at most 98 bounding boxes can be predicted. Meanwhile in YOLOv2, an image is divided into 13×13 grid cells in which a single cell is capable of generating 5 bounding boxes, making YOLOv2 able to detect up to 845 objects within a single image. This essentially allows YOLOv2 to have a better recall than YOLOv1. In theory, YOLOv3 is potentially able to achieve an even higher recall, especially when tested on an image that contains a lot of objects, thanks to the larger number of possible detections. We can calculate the maximum number of bounding boxes for a single image in YOLOv3 by computing (13×13×3) + (26×26×3) + (52×52×3) = 507 + 2028 + 8112 = 10647, where 13×13, 26×26, and 52×52 are the number of grid cells within each prediction tensor, while 3 is the number of prior boxes a single grid cell has.
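The three totals above can be reproduced with a short helper. This is a minimal sketch in plain Python, and the function name is my own.

```python
def max_boxes(grid_sizes, boxes_per_cell):
    """Total number of predicted boxes over all prediction grids."""
    return sum(s * s * boxes_per_cell for s in grid_sizes)

yolov1 = max_boxes([7], boxes_per_cell=2)
yolov2 = max_boxes([13], boxes_per_cell=5)
yolov3 = max_boxes([13, 26, 52], boxes_per_cell=3)

print(yolov1, yolov2, yolov3)  # 98 845 10647
```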

We can also see in Figure 4 that there are two concatenation steps incorporated in the network, i.e., between the original Darknet-53 architecture and the detection heads. The objective of these steps is to combine information from the deeper layer with that from the shallower layer. Combining information from different depths like this is important because when it comes to detecting smaller objects, we need both detailed spatial information (contained in the shallower layer) and better semantic information (contained in the deeper layer). Keep in mind that the feature map from the deeper layer has a smaller spatial dimension, hence we need to expand it before actually doing the concatenation. This is the reason we need to place an upsampling layer right before the concatenation.
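The upsample-then-concatenate step can be sketched on tiny feature maps using nested lists. The sizes and values here are made up, and the helper mimics nearest-neighbor upsampling, which is the default mode of `nn.Upsample` used later in the implementation.

```python
def upsample_nearest(channel, scale=2):
    """Nearest-neighbor upsampling of one 2-D channel (a list of rows)."""
    out = []
    for row in channel:
        wide_row = [v for v in row for _ in range(scale)]
        out.extend([list(wide_row) for _ in range(scale)])
    return out

# Feature maps as lists of channels; each channel is a list of rows.
deep = [[[1, 2],
         [3, 4]]]                         # 1 channel, 2x2 (deeper layer)
shallow = [[[0] * 4 for _ in range(4)],
           [[5] * 4 for _ in range(4)]]   # 2 channels, 4x4 (shallower layer)

upsampled = [upsample_nearest(ch) for ch in deep]   # 1 channel, 4x4
merged = upsampled + shallow                        # concatenate along the channel axis

print(upsampled[0])
# [[1, 1, 2, 2], [1, 1, 2, 2], [3, 3, 4, 4], [3, 3, 4, 4]]
print(len(merged), len(merged[0]), len(merged[0][0]))  # 3 4 4
```

The spatial size has to match before the channels can be stacked, and the channel count of the result is simply the sum of the two inputs.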

Multi-Label Classification

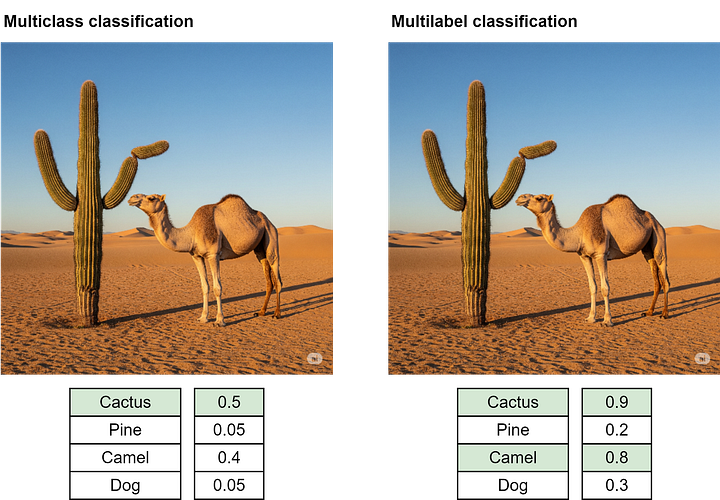

Apart from the architecture, the authors also changed the class labeling mechanism. Instead of using a standard multiclass classification paradigm, they proposed to use so-called multilabel classification. If you’re not yet familiar with it, this is basically a method where an image can be assigned multiple labels at once. Take a look at Figure 5 below to better understand this idea. In this example, the image on the left belongs to the classes person, athlete, runner, and man simultaneously. Later on, YOLOv3 is also expected to be able to make multiple class predictions on the same detected object.

In order for the model to predict multiple labels, we need to treat each class prediction output as an independent binary classifier. Look at Figure 6 below to see how multiclass classification differs from multilabel classification. The illustration on the left is a condition when we use a typical multiclass classification mechanism. Here you can see that the probabilities of all classes sum to 1 thanks to the nature of the softmax activation function within the output layer. In this example, since the class camel is predicted with the highest probability, then the final prediction would be camel regardless of how high the prediction confidence of the other classes is.

On the other hand, if we use multilabel classification, the sum of all class prediction probabilities can be greater than 1 because we use the sigmoid activation function, which by nature does not restrict the sum of all prediction confidence scores to 1. For this reason, later in the implementation we can simply apply a specific threshold to decide whether a class is predicted. In the example below, if we assume that the threshold is 0.7, then the image will be predicted as both cactus and camel.
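The contrast between the two output layers can also be reproduced numerically. The sketch below is independent of the figures: the class names are borrowed from the example, but the raw scores (logits) are hypothetical values I picked for illustration.

```python
import math

def softmax(logits):
    """Probabilities that compete with each other and sum to 1."""
    shifted = [z - max(logits) for z in logits]   # for numerical stability
    exps = [math.exp(z) for z in shifted]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(z):
    """Independent probability for a single class."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical raw scores (logits), for illustration only.
classes = ["cactus", "camel", "car"]
logits = [1.5, 2.0, -3.0]

multiclass = softmax(logits)
multilabel = [sigmoid(z) for z in logits]

# Multiclass: keep only the single most probable class.
top_class = classes[multiclass.index(max(multiclass))]

# Multilabel: keep every class whose score passes the threshold.
THRESHOLD = 0.7
predicted = [c for c, p in zip(classes, multilabel) if p >= THRESHOLD]

print(top_class)   # camel
print(predicted)   # ['cactus', 'camel']
```

The softmax scores sum to 1, whereas the sigmoid scores for cactus and camel are both above 0.8 here, so both classes pass the 0.7 threshold.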

Modified Loss Function

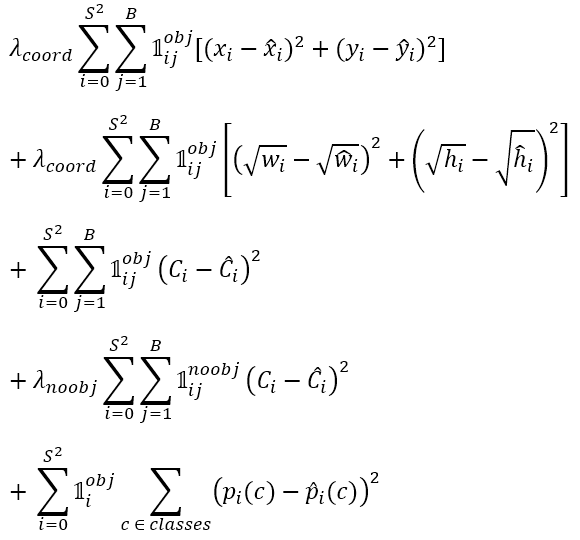

Another modification the authors made was related to the loss function. Now look at the loss function of YOLOv1 in Figure 7 below. As a refresher, the 1st and 2nd rows are responsible for computing the bounding box loss, the 3rd and 4th rows are for the objectness confidence loss, and the 5th row is for computing the classification loss. Remember that in YOLOv1 the authors used SSE (Sum of Squared Errors) in all these five rows to make things simple.

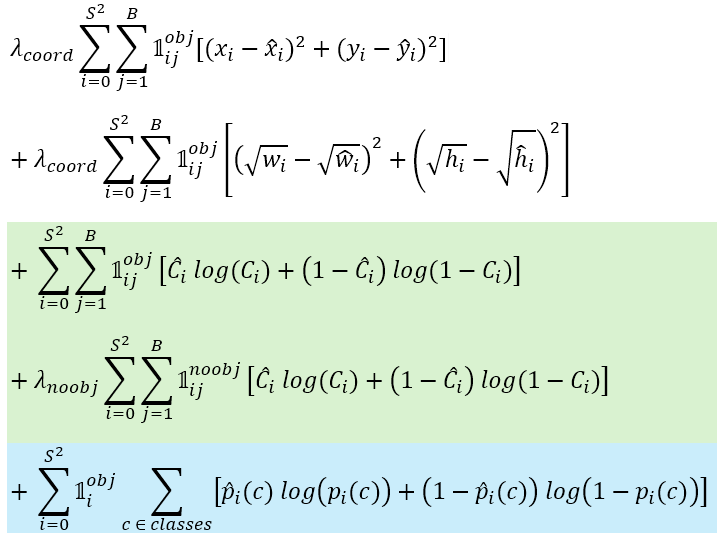

In YOLOv3, the authors decided to replace the objectness loss (the 3rd and 4th rows) with binary cross entropy, considering that the target for this part is either 1 or 0, i.e., whether there is an object midpoint or not. Thus, it makes more sense to treat this as a binary classification problem rather than a regression problem.

Binary cross entropy will also be used in the classification loss (5th row). This is because we use the multilabel classification mechanism discussed earlier, where we treat each output neuron as an independent binary classifier. Remember that if we were to perform a standard multiclass classification task, we would typically set the loss function to categorical cross entropy instead.
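For readers who want to see binary cross entropy in isolation, here is a minimal sketch in plain Python. It is not the full YOLOv3 loss: the prediction values are hypothetical sigmoid outputs, and averaging over the classes (rather than summing) is a choice I made for the example. In PyTorch, a loss of this kind is available as `nn.BCEWithLogitsLoss`, which takes raw logits instead of probabilities.

```python
import math

def binary_cross_entropy(predictions, targets, eps=1e-7):
    """Mean BCE over independent binary predictions in the range (0, 1)."""
    total = 0.0
    for p, t in zip(predictions, targets):
        p = min(max(p, eps), 1.0 - eps)   # avoid log(0)
        total += -(t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
    return total / len(predictions)

# Hypothetical sigmoid outputs for four classes of one detected object,
# where two classes are correct at the same time (multilabel target).
targets = [1.0, 1.0, 0.0, 0.0]
good_prediction = [0.9, 0.8, 0.1, 0.2]
bad_prediction = [0.2, 0.1, 0.8, 0.9]

print(binary_cross_entropy(good_prediction, targets))
print(binary_cross_entropy(bad_prediction, targets))
```

The first call returns a much smaller loss than the second, since every class is scored independently against its own 0 or 1 target.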

Now below is what the loss function looks like after we replace the SSE with binary cross entropy for the objectness (green) and the multilabel classification (blue) parts. Note that this equation is created based on the YouTube tutorial I watched given at reference number [12] because, again, the authors do not explicitly provide the final loss function in the paper.

Some Experimental Results

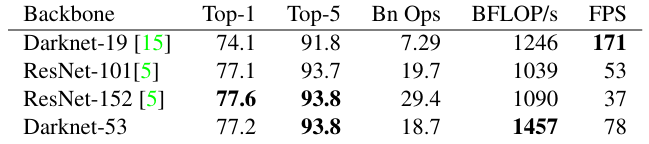

With all the modifications discussed above, the authors found that the improvement in performance is pretty impressive. The first experimental result I want to show you is related to the performance of the backbone model in classifying images on the ImageNet dataset. You can see in Figure 9 below that the improvement from Darknet-19 (YOLOv2) to Darknet-53 (YOLOv3) is quite significant in terms of both the top-1 accuracy (74.1 to 77.2) and the top-5 accuracy (91.8 to 93.8). It is necessary to acknowledge that ResNet-101 and ResNet-152 perform about as well as Darknet-53 in accuracy, but if we compare the FPS (measured on an Nvidia Titan X), we can see that Darknet-53 is a lot faster than both ResNet variants.

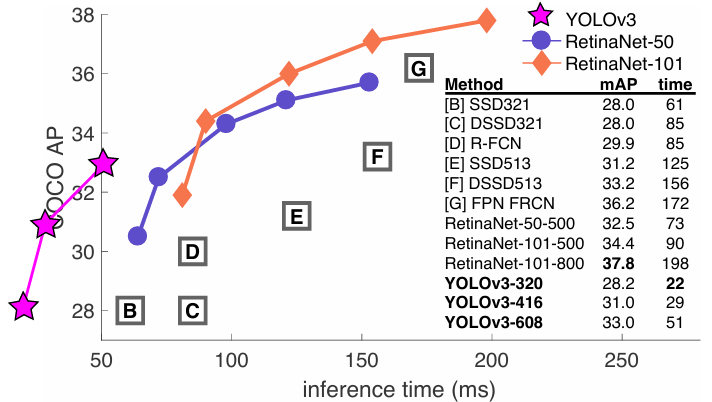

Similar behavior can also be observed on the object detection task, where Figure 10 shows that all YOLOv3 variants obtained the fastest inference time among all the methods compared, despite not having the best accuracy. You can see in the figure that the largest YOLOv3 variant is nearly 4 times faster than the largest RetinaNet variant (51 ms vs 198 ms). Moreover, the largest YOLOv3 variant already surpasses the mAP of the smallest RetinaNet variant (33.0 vs 32.5) while still having a faster inference time (51 ms vs 73 ms). These experimental results indicate that YOLOv3 was the state-of-the-art object detection model in terms of computational speed at that moment.

YOLOv3 Architecture Implementation

As we have already discussed pretty much everything about the theory behind YOLOv3, we can now start implementing the architecture from scratch. In Codeblock 1 below, I import the torch module and its nn submodule. Here I also initialize the NUM_PRIORS and NUM_CLASS variables, which correspond to the number of prior boxes within each grid cell and the number of object classes in the dataset, respectively.

# Codeblock 1
import torch
import torch.nn as nn

NUM_PRIORS = 3
NUM_CLASS = 80

Convolutional Block Implementation

What I am going to implement first is the main building block of the network, which I refer to as the Convolutional block as seen in Codeblock 2. The structure of this block is much the same as the one used in YOLOv2, where it follows the Conv-BN-Leaky ReLU pattern. When we use this kind of structure, don’t forget to set the bias parameter of the conv layer to False (at line #(1)) because a bias term is redundant if we place a batch normalization layer directly after it. Here I also configure the padding of the conv layer such that it is automatically set to 1 whenever the kernel size is 3×3 or 0 whenever we use a 1×1 kernel (#(2)). Next, as the conv, bn, and leaky_relu layers have been initialized, we can simply connect them all using the code written inside the forward() method (#(3)).
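The padding rule described above can be checked with the standard output-size formula for a convolution without dilation, floor((n + 2p − k) / s) + 1. The sketch below uses plain Python, and the helper names are my own.

```python
def conv_output_size(size, kernel_size, stride=1, padding=0):
    """Output height/width of a convolution (no dilation)."""
    return (size + 2 * padding - kernel_size) // stride + 1

def padding_for(kernel_size):
    """Padding rule described above: 1 for a 3x3 kernel, otherwise 0."""
    return 1 if kernel_size == 3 else 0

# Stride 1: both kernel sizes preserve the resolution.
print(conv_output_size(416, 3, stride=1, padding=padding_for(3)))  # 416
print(conv_output_size(416, 1, stride=1, padding=padding_for(1)))  # 416

# Stride 2 with a 3x3 kernel: the resolution is halved.
print(conv_output_size(416, 3, stride=2, padding=padding_for(3)))  # 208
```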

# Codeblock 2
class Convolutional(nn.Module):
    def __init__(self,
                 in_channels,
                 out_channels,
                 kernel_size,
                 stride=1):
        super().__init__()

        self.conv = nn.Conv2d(in_channels=in_channels,
                              out_channels=out_channels,
                              kernel_size=kernel_size,
                              stride=stride,
                              bias=False,                           #(1)
                              padding=1 if kernel_size==3 else 0)   #(2)
        self.bn = nn.BatchNorm2d(num_features=out_channels)
        self.leaky_relu = nn.LeakyReLU(negative_slope=0.1)

    def forward(self, x):        #(3)
        print(f'original\t: {x.size()}')

        x = self.conv(x)
        print(f'after conv\t: {x.size()}')

        x = self.bn(x)
        print(f'after bn\t: {x.size()}')

        x = self.leaky_relu(x)
        print(f'after leaky relu: {x.size()}')

        return x

To ensure that our main building block works properly, we will test it by simulating the very first Convolutional block in Figure 1. Remember that since YOLOv3 takes an image of size 416×416 as the input, here in Codeblock 3 I create a dummy tensor of that shape to simulate an image passed through that layer. Also, note that here I leave the stride at its default (1) because at this point we don’t want to perform spatial downsampling.

# Codeblock 3
convolutional = Convolutional(in_channels=3,
                              out_channels=32,
                              kernel_size=3)

x = torch.randn(1, 3, 416, 416)
out = convolutional(x)

# Codeblock 3 Output
original : torch.Size([1, 3, 416, 416])
after conv : torch.Size([1, 32, 416, 416])
after bn : torch.Size([1, 32, 416, 416])
after leaky relu : torch.Size([1, 32, 416, 416])

Now let’s test our Convolutional block again, but this time I’ll set the stride to 2 to simulate the second convolutional block in the architecture. We can see in the output below that the spatial dimension halves from 416×416 to 208×208, indicating that this approach is a valid replacement for the maxpooling layers we previously had in YOLOv1 and YOLOv2.

# Codeblock 4
convolutional = Convolutional(in_channels=32,
                              out_channels=64,
                              kernel_size=3,
                              stride=2)

x = torch.randn(1, 32, 416, 416)
out = convolutional(x)

# Codeblock 4 Output
original : torch.Size([1, 32, 416, 416])
after conv : torch.Size([1, 64, 208, 208])
after bn : torch.Size([1, 64, 208, 208])
after leaky relu : torch.Size([1, 64, 208, 208])

Residual Block Implementation

As the Convolutional block is done, what I am going to do now is to implement the next building block: Residual. This block generally follows the structure I displayed back in Figure 3, where it consists of a residual connection that skips through two Convolutional blocks. Take a look at the Codeblock 5 below to see how I implement it.

The two convolution layers themselves follow the pattern in Figure 1, where the first Convolutional halves the number of channels (#(1)), which is then doubled again by the second Convolutional (#(3)). Note that the first convolution uses a 1×1 kernel (#(2)) whereas the second one uses a 3×3 kernel (#(4)). Next, what we do inside the forward() method is simply connect the two convolutions sequentially, after which the final output is summed with the original input tensor (#(5)) before being returned.
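One consequence of this halve-then-restore pattern is a smaller number of weights than a block that keeps all channels throughout. The sketch below only counts convolution weights (batch normalization parameters are ignored), and the two-3×3 alternative is a hypothetical baseline I introduce for comparison, not something taken from the paper.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Weight count of a bias-free 2-D convolution."""
    return in_channels * out_channels * kernel_size * kernel_size

num_channels = 64

# Residual block as described above: 1x1 conv halves the channels,
# then 3x3 conv restores them.
bottleneck = (conv_weights(num_channels, num_channels // 2, 1)
              + conv_weights(num_channels // 2, num_channels, 3))

# Hypothetical baseline for comparison: two 3x3 convs that keep all channels.
plain = 2 * conv_weights(num_channels, num_channels, 3)

print(bottleneck, plain)  # 20480 73728
```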

# Codeblock 5
class Residual(nn.Module):
    def __init__(self, num_channels):
        super().__init__()

        self.conv0 = Convolutional(in_channels=num_channels,
                                   out_channels=num_channels//2,   #(1)
                                   kernel_size=1,                  #(2)
                                   stride=1)

        self.conv1 = Convolutional(in_channels=num_channels//2,
                                   out_channels=num_channels,      #(3)
                                   kernel_size=3,                  #(4)
                                   stride=1)

    def forward(self, x):
        original = x.clone()
        print(f'original\t: {x.size()}')

        x = self.conv0(x)
        print(f'after conv0\t: {x.size()}')

        x = self.conv1(x)
        print(f'after conv1\t: {x.size()}')

        x = x + original      #(5)
        print(f'after summation\t: {x.size()}')

        return x

We will now test the Residual block we just created using the Codeblock 6 below. Here I set the num_channels parameter to 64 because I want to simulate the very first residual block in the Darknet-53 architecture (see Figure 1).

# Codeblock 6
residual = Residual(num_channels=64)

x = torch.randn(1, 64, 208, 208)
out = residual(x)

# Codeblock 6 Output
original : torch.Size([1, 64, 208, 208])
after conv0 : torch.Size([1, 32, 208, 208])
after conv1 : torch.Size([1, 64, 208, 208])
after summation : torch.Size([1, 64, 208, 208])

If you take a closer look at the above output, you will notice that the shapes of the input and output tensors are exactly the same. This allows us to stack multiple residual blocks easily. In the Codeblock 7 below I stack 4 residual blocks and pass a tensor through them, simulating the very last stack of residual blocks in the architecture.

# Codeblock 7
residuals = nn.ModuleList([])
for _ in range(4):
    residual = Residual(num_channels=1024)
    residuals.append(residual)

x = torch.randn(1, 1024, 13, 13)

for i in range(len(residuals)):
    x = residuals[i](x)
    print(f'after residuals #{i}\t: {x.size()}')

# Codeblock 7 Output
after residuals #0 : torch.Size([1, 1024, 13, 13])
after residuals #1 : torch.Size([1, 1024, 13, 13])
after residuals #2 : torch.Size([1, 1024, 13, 13])
after residuals #3 : torch.Size([1, 1024, 13, 13])

Darknet-53 Implementation

Using the Convolutional and Residual building blocks we created earlier, we can now actually construct the Darknet-53 model. Everything I initialize inside the __init__() method below is based on the architecture in Figure 1. However, remember that we need to stop at the last residual block since we don’t need the global average pooling and the fully-connected layers. Not only that, at the lines marked with #(1) and #(2) I store the intermediate feature maps in separate variables (branch0 and branch1). We will later return these feature maps alongside the output from the main flow (x) (#(3)) to implement the branches that flow into the three detection heads.

# Codeblock 8
class Darknet53(nn.Module):
    def __init__(self):
        super().__init__()

        self.convolutional0 = Convolutional(in_channels=3,
                                            out_channels=32,
                                            kernel_size=3)

        self.convolutional1 = Convolutional(in_channels=32,
                                            out_channels=64,
                                            kernel_size=3,
                                            stride=2)
        self.residuals0 = nn.ModuleList([Residual(num_channels=64) for _ in range(1)])

        self.convolutional2 = Convolutional(in_channels=64,
                                            out_channels=128,
                                            kernel_size=3,
                                            stride=2)
        self.residuals1 = nn.ModuleList([Residual(num_channels=128) for _ in range(2)])

        self.convolutional3 = Convolutional(in_channels=128,
                                            out_channels=256,
                                            kernel_size=3,
                                            stride=2)
        self.residuals2 = nn.ModuleList([Residual(num_channels=256) for _ in range(8)])

        self.convolutional4 = Convolutional(in_channels=256,
                                            out_channels=512,
                                            kernel_size=3,
                                            stride=2)
        self.residuals3 = nn.ModuleList([Residual(num_channels=512) for _ in range(8)])

        self.convolutional5 = Convolutional(in_channels=512,
                                            out_channels=1024,
                                            kernel_size=3,
                                            stride=2)
        self.residuals4 = nn.ModuleList([Residual(num_channels=1024) for _ in range(4)])

    def forward(self, x):
        print(f'original\t\t: {x.size()}\n')

        x = self.convolutional0(x)
        print(f'after convolutional0\t: {x.size()}')

        x = self.convolutional1(x)
        print(f'after convolutional1\t: {x.size()}\n')

        for i in range(len(self.residuals0)):
            x = self.residuals0[i](x)
            print(f'after residuals0 #{i}\t: {x.size()}')

        x = self.convolutional2(x)
        print(f'\nafter convolutional2\t: {x.size()}\n')

        for i in range(len(self.residuals1)):
            x = self.residuals1[i](x)
            print(f'after residuals1 #{i}\t: {x.size()}')

        x = self.convolutional3(x)
        print(f'\nafter convolutional3\t: {x.size()}\n')

        for i in range(len(self.residuals2)):
            x = self.residuals2[i](x)
            print(f'after residuals2 #{i}\t: {x.size()}')

        branch0 = x.clone()      #(1)

        x = self.convolutional4(x)
        print(f'\nafter convolutional4\t: {x.size()}\n')

        for i in range(len(self.residuals3)):
            x = self.residuals3[i](x)
            print(f'after residuals3 #{i}\t: {x.size()}')

        branch1 = x.clone()      #(2)

        x = self.convolutional5(x)
        print(f'\nafter convolutional5\t: {x.size()}\n')

        for i in range(len(self.residuals4)):
            x = self.residuals4[i](x)
            print(f'after residuals4 #{i}\t: {x.size()}')

        return branch0, branch1, x      #(3)

Now we test our Darknet53 class by running the Codeblock 9 below. You can see in the resulting output that everything seems to work properly as the shape of the tensor correctly transforms according to the guide in Figure 1. One thing that I haven’t mentioned before is that this Darknet-53 architecture downscales the input image by a factor of 32. So, with this downsampling factor, an input image of shape 256×256 will become 8×8 in the end (as shown in Figure 1), whereas an input of shape 416×416 will result in a 13×13 prediction tensor.
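The factor of 32 follows from the five stride-2 convolutions in the backbone, each of which halves the resolution (2⁵ = 32). A minimal sketch in plain Python, with a helper name of my own, traces the spatial size after each of them:

```python
def trace_resolution(input_size, num_downsamples=5):
    """Spatial size after each stride-2 convolution in the backbone."""
    sizes = []
    size = input_size
    for _ in range(num_downsamples):
        size //= 2          # a 3x3, stride-2, padding-1 conv halves an even size
        sizes.append(size)
    return sizes

print(trace_resolution(256))  # [128, 64, 32, 16, 8]
print(trace_resolution(416))  # [208, 104, 52, 26, 13]
print(2 ** 5)                 # 32, the overall downsampling factor
```

For the 416×416 input, the 52×52 and 26×26 stages are the ones stored as branch0 and branch1, while the final 13×13 stage is the main output x.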

# Codeblock 9
darknet53 = Darknet53()

x = torch.randn(1, 3, 416, 416)
out = darknet53(x)

# Codeblock 9 Output
original : torch.Size([1, 3, 416, 416])

after convolutional0 : torch.Size([1, 32, 416, 416])
after convolutional1 : torch.Size([1, 64, 208, 208])

after residuals0 #0 : torch.Size([1, 64, 208, 208])

after convolutional2 : torch.Size([1, 128, 104, 104])

after residuals1 #0 : torch.Size([1, 128, 104, 104])
after residuals1 #1 : torch.Size([1, 128, 104, 104])

after convolutional3 : torch.Size([1, 256, 52, 52])

after residuals2 #0 : torch.Size([1, 256, 52, 52])
after residuals2 #1 : torch.Size([1, 256, 52, 52])
after residuals2 #2 : torch.Size([1, 256, 52, 52])
after residuals2 #3 : torch.Size([1, 256, 52, 52])
after residuals2 #4 : torch.Size([1, 256, 52, 52])
after residuals2 #5 : torch.Size([1, 256, 52, 52])
after residuals2 #6 : torch.Size([1, 256, 52, 52])
after residuals2 #7 : torch.Size([1, 256, 52, 52])

after convolutional4 : torch.Size([1, 512, 26, 26])

after residuals3 #0 : torch.Size([1, 512, 26, 26])
after residuals3 #1 : torch.Size([1, 512, 26, 26])
after residuals3 #2 : torch.Size([1, 512, 26, 26])
after residuals3 #3 : torch.Size([1, 512, 26, 26])
after residuals3 #4 : torch.Size([1, 512, 26, 26])
after residuals3 #5 : torch.Size([1, 512, 26, 26])
after residuals3 #6 : torch.Size([1, 512, 26, 26])
after residuals3 #7 : torch.Size([1, 512, 26, 26])

after convolutional5 : torch.Size([1, 1024, 13, 13])

after residuals4 #0 : torch.Size([1, 1024, 13, 13])
after residuals4 #1 : torch.Size([1, 1024, 13, 13])
after residuals4 #2 : torch.Size([1, 1024, 13, 13])
after residuals4 #3 : torch.Size([1, 1024, 13, 13])

At this point we can also see what the outputs produced by the three branches look like simply by printing out the shapes of branch0, branch1, and x as shown in Codeblock 10 below. Notice that the spatial dimensions of these three tensors vary. Later on, the tensors from the deeper layers will be upsampled so that we can perform channel-wise concatenation with those from the shallower ones.

# Codeblock 10
print(out[0].shape)      # branch0
print(out[1].shape)      # branch1
print(out[2].shape)      # x

# Codeblock 10 Output
torch.Size([1, 256, 52, 52])
torch.Size([1, 512, 26, 26])
torch.Size([1, 1024, 13, 13])

Detection Head Implementation

If you go back to Figure 4, you will notice that each of the detection heads consists of two convolution layers. However, these two convolutions are not identical. In Codeblock 11 below I use the Convolutional block for the first one and a plain nn.Conv2d for the second one. This is done because the second convolution acts as the final layer, hence it is responsible for producing the raw output (instead of an output that has been batch-normalized and passed through Leaky ReLU).

# Codeblock 11
class DetectionHead(nn.Module):
    def __init__(self, num_channels):
        super().__init__()

        self.convhead0 = Convolutional(in_channels=num_channels,
                                       out_channels=num_channels*2,
                                       kernel_size=3)

        self.convhead1 = nn.Conv2d(in_channels=num_channels*2,
                                   out_channels=NUM_PRIORS*(NUM_CLASS+5),
                                   kernel_size=1)

    def forward(self, x):
        print(f'original\t: {x.size()}')

        x = self.convhead0(x)
        print(f'after convhead0\t: {x.size()}')

        x = self.convhead1(x)
        print(f'after convhead1\t: {x.size()}')

        return x

Now in Codeblock 12 I’ll simulate the 13×13 detection head, hence I set the input feature map to have the shape of 512×13×13 (#(1)). You’ll see where the number 512 comes from in the subsequent section.

# Codeblock 12
detectionhead = DetectionHead(num_channels=512)

x = torch.randn(1, 512, 13, 13)      #(1)
out = detectionhead(x)

And below is what the resulting output looks like. We can see here that the tensor expands to 1024×13×13 before eventually shrinking to 255×13×13. Remember that in YOLOv3, as long as we set NUM_PRIORS to 3 and NUM_CLASS to 80, the number of output channels will always be 255 regardless of the number of input channels fed into the DetectionHead.

# Codeblock 12 Output
original : torch.Size([1, 512, 13, 13])
after convhead0 : torch.Size([1, 1024, 13, 13])
after convhead1 : torch.Size([1, 255, 13, 13])

The Entire YOLOv3 Architecture

Okay, now that we have initialized the main building blocks, what we need to do next is to construct the entire YOLOv3 architecture. Here I will also discuss the remaining components we haven’t covered. The code is quite long though, so I break it down into two codeblocks: Codeblock 13a and Codeblock 13b. Just ensure that these two codeblocks are written within the same notebook cell if you want to run them on your own.

In Codeblock 13a below, what we do first is to initialize the backbone model (#(1)). Next, we create a stack of 5 Convolutional blocks that alternately halve and double the number of channels. The conv block that reduces the channel count uses a 1×1 kernel while the one that increases it uses a 3×3 kernel, just like the structure we use in the Residual block. We initialize such a stack of 5 convolutions for each of the three detection heads. Specifically for the feature maps that flow into the 26×26 and 52×52 heads, we need to initialize another convolution layer (#(2) and #(4)) and an upsampling layer (#(3) and #(5)) in addition to the 5 convolutions.

# Codeblock 13a
class YOLOv3(nn.Module):
    def __init__(self):
        super().__init__()

        ###############################################
        # Backbone initialization.

        self.darknet53 = Darknet53()      #(1)

        ###############################################
        # For 13x13 output.

        self.conv0 = Convolutional(in_channels=1024, out_channels=512, kernel_size=1)
        self.conv1 = Convolutional(in_channels=512, out_channels=1024, kernel_size=3)
        self.conv2 = Convolutional(in_channels=1024, out_channels=512, kernel_size=1)
        self.conv3 = Convolutional(in_channels=512, out_channels=1024, kernel_size=3)
        self.conv4 = Convolutional(in_channels=1024, out_channels=512, kernel_size=1)

        self.detection_head_large_obj = DetectionHead(num_channels=512)

        ###############################################
        # For 26x26 output.

        self.conv5 = Convolutional(in_channels=512, out_channels=256, kernel_size=1)      #(2)
        self.upsample0 = nn.Upsample(scale_factor=2)      #(3)

        self.conv6 = Convolutional(in_channels=768, out_channels=256, kernel_size=1)
        self.conv7 = Convolutional(in_channels=256, out_channels=512, kernel_size=3)
        self.conv8 = Convolutional(in_channels=512, out_channels=256, kernel_size=1)
        self.conv9 = Convolutional(in_channels=256, out_channels=512, kernel_size=3)
        self.conv10 = Convolutional(in_channels=512, out_channels=256, kernel_size=1)

        self.detection_head_medium_obj = DetectionHead(num_channels=256)

        ###############################################
        # For 52x52 output.

        self.conv11 = Convolutional(in_channels=256, out_channels=128, kernel_size=1)      #(4)
        self.upsample1 = nn.Upsample(scale_factor=2)      #(5)

        self.conv12 = Convolutional(in_channels=384, out_channels=128, kernel_size=1)
        self.conv13 = Convolutional(in_channels=128, out_channels=256, kernel_size=3)
        self.conv14 = Convolutional(in_channels=256, out_channels=128, kernel_size=1)
        self.conv15 = Convolutional(in_channels=128, out_channels=256, kernel_size=3)
        self.conv16 = Convolutional(in_channels=256, out_channels=128, kernel_size=1)

        self.detection_head_small_obj = DetectionHead(num_channels=128)

Now in Codeblock 13b we define the flow of the network inside the forward() method. Here we first pass the input tensor through the darknet53 model (#(1)), which produces 3 output tensors: branch0, branch1, and x. Then, what we do next is to connect the layers one after another according to the flow given in Figure 4.

# Codeblock 13b
    def forward(self, x):

        ###############################################
        # Backbone.

        branch0, branch1, x = self.darknet53(x)      #(1)
        print(f'branch0\t\t\t: {branch0.size()}')
        print(f'branch1\t\t\t: {branch1.size()}')
        print(f'x\t\t\t: {x.size()}\n')

        ###############################################
        # Flow to 13x13 detection head.

        x = self.conv0(x)
        print(f'after conv0\t\t: {x.size()}')

        x = self.conv1(x)
        print(f'after conv1\t\t: {x.size()}')

        x = self.conv2(x)
        print(f'after conv2\t\t: {x.size()}')

        x = self.conv3(x)
        print(f'after conv3\t\t: {x.size()}')

        x = self.conv4(x)
        print(f'after conv4\t\t: {x.size()}')

        large_obj = self.detection_head_large_obj(x)
        print(f'large object detection\t: {large_obj.size()}\n')

        ###############################################
        # Flow to 26x26 detection head.

        x = self.conv5(x)
        print(f'after conv5\t\t: {x.size()}')

        x = self.upsample0(x)
        print(f'after upsample0\t\t: {x.size()}')

        x = torch.cat([x, branch1], dim=1)
        print(f'after concatenate\t: {x.size()}')

        x = self.conv6(x)
        print(f'after conv6\t\t: {x.size()}')

        x = self.conv7(x)
        print(f'after conv7\t\t: {x.size()}')

        x = self.conv8(x)
        print(f'after conv8\t\t: {x.size()}')

        x = self.conv9(x)
        print(f'after conv9\t\t: {x.size()}')

        x = self.conv10(x)
        print(f'after conv10\t\t: {x.size()}')

        medium_obj = self.detection_head_medium_obj(x)
        print(f'medium object detection\t: {medium_obj.size()}\n')

        ###############################################
        # Flow to 52x52 detection head.

        x = self.conv11(x)
        print(f'after conv11\t\t: {x.size()}')

        x = self.upsample1(x)
        print(f'after upsample1\t\t: {x.size()}')

        x = torch.cat([x, branch0], dim=1)
        print(f'after concatenate\t: {x.size()}')

        x = self.conv12(x)
        print(f'after conv12\t\t: {x.size()}')

        x = self.conv13(x)
        print(f'after conv13\t\t: {x.size()}')

        x = self.conv14(x)
        print(f'after conv14\t\t: {x.size()}')

        x = self.conv15(x)
        print(f'after conv15\t\t: {x.size()}')

        x = self.conv16(x)
        print(f'after conv16\t\t: {x.size()}')

        small_obj = self.detection_head_small_obj(x)
        print(f'small object detection\t: {small_obj.size()}\n')

        ###############################################
        # Return prediction tensors.

        return large_obj, medium_obj, small_obj

As we have completed the forward() method, we can now test the entire YOLOv3 model by passing a single RGB image of size 416×416 as shown in Codeblock 14.

# Codeblock 14

yolov3 = YOLOv3()

x = torch.randn(1, 3, 416, 416)

out = yolov3(x)

Below is the output you get after running the codeblock above. Everything seems to work properly, as the dummy image successfully passes through all layers in the network. One thing worth noting is that the 768-channel feature map at line #(4) comes from concatenating the tensors at lines #(2) and #(3), i.e., 512 + 256 channels. The same applies to the 384-channel tensor at line #(6), which is the concatenation of the feature maps at lines #(1) and #(5), i.e., 256 + 128 channels.

# Codeblock 14 Output

branch0 : torch.Size([1, 256, 52, 52]) #(1)

branch1 : torch.Size([1, 512, 26, 26]) #(2)

x : torch.Size([1, 1024, 13, 13])

after conv0 : torch.Size([1, 512, 13, 13])

after conv1 : torch.Size([1, 1024, 13, 13])

after conv2 : torch.Size([1, 512, 13, 13])

after conv3 : torch.Size([1, 1024, 13, 13])

after conv4 : torch.Size([1, 512, 13, 13])

large object detection : torch.Size([1, 255, 13, 13])

after conv5 : torch.Size([1, 256, 13, 13])

after upsample0 : torch.Size([1, 256, 26, 26]) #(3)

after concatenate : torch.Size([1, 768, 26, 26]) #(4)

after conv6 : torch.Size([1, 256, 26, 26])

after conv7 : torch.Size([1, 512, 26, 26])

after conv8 : torch.Size([1, 256, 26, 26])

after conv9 : torch.Size([1, 512, 26, 26])

after conv10 : torch.Size([1, 256, 26, 26])

medium object detection : torch.Size([1, 255, 26, 26])

after conv11 : torch.Size([1, 128, 26, 26])

after upsample1 : torch.Size([1, 128, 52, 52]) #(5)

after concatenate : torch.Size([1, 384, 52, 52]) #(6)

after conv12 : torch.Size([1, 128, 52, 52])

after conv13 : torch.Size([1, 256, 52, 52])

after conv14 : torch.Size([1, 128, 52, 52])

after conv15 : torch.Size([1, 256, 52, 52])

after conv16 : torch.Size([1, 128, 52, 52])

small object detection : torch.Size([1, 255, 52, 52])

To make things clearer, I also print the shape of each detection head's output in Codeblock 15 below. All the resulting prediction tensors have the shapes we expected earlier, so I believe our YOLOv3 implementation is correct and ready to train.

# Codeblock 15

print(out[0].shape)

print(out[1].shape)

print(out[2].shape)

# Codeblock 15 Output

torch.Size([1, 255, 13, 13])

torch.Size([1, 255, 26, 26])

torch.Size([1, 255, 52, 52])

I think that’s pretty much everything about YOLOv3 and its architecture implementation from scratch. As we’ve seen above, the authors achieved an impressive performance improvement over the previous YOLO version, even though they did not consider their changes to the system significant, hence the title “An Incremental Improvement.”
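As a final sanity check, the shapes printed in the Codeblock 15 output can also be derived by hand. The snippet below is only a minimal sketch and not part of the original implementation: the helper name expected_head_shapes is hypothetical, and it assumes the standard YOLOv3 setting of 80 COCO classes, 3 anchor boxes per scale, and backbone strides of 32, 16, and 8. It also repeats the channel arithmetic of the two concatenations discussed earlier.

```python
# Sketch: derive the expected detection-head shapes from first principles.
# Assumptions (not read from the model code): 80 classes as in COCO,
# 3 anchor boxes per scale, and total strides of 32, 16 and 8.

def expected_head_shapes(image_size=416, num_classes=80,
                         num_anchors=3, strides=(32, 16, 8), batch_size=1):
    # Each anchor predicts 4 box offsets + 1 objectness score + class scores.
    channels = num_anchors * (4 + 1 + num_classes)
    shapes = []
    for stride in strides:
        grid = image_size // stride  # spatial size of the feature map
        shapes.append((batch_size, channels, grid, grid))
    return shapes


shapes = expected_head_shapes()
for name, shape in zip(("large", "medium", "small"), shapes):
    print(f"{name} object head: {shape}")

# Channel counts after the two concatenations:
# upsampled feature map + skip connection from the backbone.
concat_26 = 256 + 512   # upsample0 output + branch1
concat_52 = 128 + 256   # upsample1 output + branch0
print(concat_26, concat_52)
```

If the model from Codeblock 14 is available, the result can be compared against the real tensors with something like `[tuple(t.shape) for t in out] == shapes`.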

Please let me know if you spot any mistakes in this article. You can also find the fully working code in my GitHub repo [13]. Thanks for reading, see ya in my next article!

References

[1] Joseph Redmon and Ali Farhadi. YOLOv3: An Incremental Improvement. arXiv. https://arxiv.org/abs/1804.02767 [Accessed August 24, 2025].

[2] Muhammad Ardi. YOLOv1 Paper Walkthrough: The Day YOLO First Saw the World. Medium. https://ai.gopubby.com/yolov1-paper-walkthrough-the-day-yolo-first-saw-the-world-ccff8b60d84b [Accessed March 1, 2026].

[3] Muhammad Ardi. YOLOv1 Loss Function Walkthrough: Regression for All. Medium. https://ai.gopubby.com/yolov1-loss-function-walkthrough-regression-for-all-18c34be6d7cb [Accessed March 1, 2026].

[4] Muhammad Ardi. YOLOv2 & YOLO9000 Paper Walkthrough: Better, Faster, Stronger. Towards Data Science. https://towardsdatascience.com/yolov2-yolo9000-paper-walkthrough-better-faster-stronger/ [Accessed March 1, 2026].

[5] Image originally created by author.

[6] YOLO v3 introduction to object detection with TensorFlow 2. PyLessons. https://pylessons.com/YOLOv3-TF2-introduction [Accessed August 24, 2025].

[7] aladdinpersson. YOLOv3. GitHub. https://github.com/aladdinpersson/Machine-Learning-Collection/blob/master/ML/Pytorch/object_detection/YOLOv3/model.py [Accessed August 24, 2025].

[8] Kaiming He et al. Deep Residual Learning for Image Recognition. arXiv. https://arxiv.org/abs/1512.03385 [Accessed August 24, 2025].

[9] Langcai Cao et al. A Text Detection Algorithm for Image of Student Exercises Based on CTPN and Enhanced YOLOv3. IEEE Access. https://ieeexplore.ieee.org/document/9200481 [Accessed August 24, 2025].

[10] Image by author, partially generated by Gemini.

[11] Joseph Redmon et al. You Only Look Once: Unified, Real-Time Object Detection. arXiv. https://arxiv.org/pdf/1506.02640 [Accessed July 5, 2025].

[12] ML For Nerds. YOLO-V3: An Incremental Improvement || YOLO OBJECT DETECTION SERIES. YouTube. https://www.youtube.com/watch?v=9fhAbvPWzKs&t=174s [Accessed August 24, 2025].

[13] MuhammadArdiPutra. Even Better, but Not That Much — YOLOv3. GitHub. https://github.com/MuhammadArdiPutra/medium_articles/blob/main/Even%20Better%2C%20but%20Not%20That%20Much%20-%20YOLOv3.ipynb [Accessed August 24, 2025].